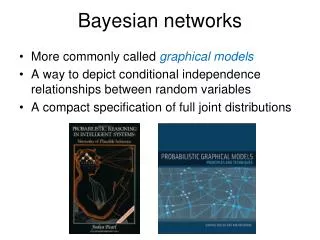

Bayesian Networks

Bayesian Networks. Introduction. A problem domain is modeled by a list of variables X 1 , …, X n Knowledge about the problem domain is represented by a joint probability P(X 1 , …, X n ). Introduction. Example: Alarm

Bayesian Networks

E N D

Presentation Transcript

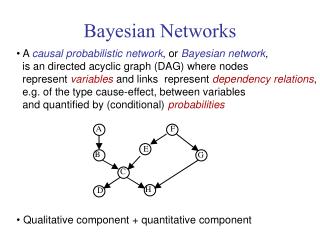

Introduction • A problem domain is modeled by a list of variables X1, …, Xn • Knowledge about the problem domain is represented by a joint probability P(X1, …, Xn)

Introduction Example: Alarm • The story: In LA burglary and earthquake are not uncommon. They both can cause alarm. In case of alarm, two neighbors John and Mary may call • Problem: Estimate the probability of a burglary based who has or has not called • Variables: Burglary (B), Earthquake (E), Alarm (A), JohnCalls (J), MaryCalls (M) • Knowledge required to solve the problem: P(B, E, A, J, M)

Introduction • What is the probability of burglary given that Mary called, P(B = y | M = y)? • Compute marginal probability:P(B , M) = E, A, J P(B, E, A, J, M) • Use the definition of conditional probability • Answer:

Introduction • Difficulty: Complexity in model construction and inference • In Alarm example: • 31 numbers needed • Computing P(B = y | M = y) takes 29 additions • In general • P(X1, … Xn) needs at least 2n – 1numbers to specify the joint probability • Exponential storage and inference

Conditional Independence • Overcome the problem of exponential size by exploiting conditional independence • The chain rule of probabilities:

Conditional Independence • Conditional independence in the problem domain:Domain usually allows to identify a subset pa(Xi) µ {X1, …, Xi – 1} such that given pa(Xi), Xi is independent of all variables in {X1, …, Xi - 1} \ pa{Xi}, i.e. P(Xi | X1, …, Xi – 1) = P(Xi | pa(Xi))Then

Conditional Independence • As a result, the joint probability P(X1, …, Xn) can be represented as the conditional probabilities P(Xi | pa(Xi)) • Example continued:P(B, E, A, J, M) =P(B)P(E|B)P(A|B,E)P(J|A,B,E)P(M|B,E,A,J) =P(B)P(E)P(A|B,E)P(J|A)P(M|A) • pa(B) = {}, pa(E) = {}, pa(A) = {B, E}, pa{J} = {A}, pa{M} = {A} • Conditional probability table specifies: P(B), P(E), P(A | B, E), P(M | A), P(J | A)

Conditional Independence As a result: • Model size reduced • Model construction easier • Inference easier

B E A J M Graphical Representation • To graphically represent the conditional independence relationships, construct a directed graph by drawing an arc from Xj to Xi iff Xj pa(Xi) • pa(B) = {}, pa(E) = {}, pa(A) = {B, E}, pa{J} = {A}, pa{M} = {A}

B E A J M Graphical Representation • We also attach the conditional probability table P(Xi | pa(Xi)) to node Xi • The result: Bayesian network P(B) P(E) P(A | B, E) P(J | A) P(M | A)

Formal Definition A Bayesian network is: • An acyclic directed graph (DAG), where • Each node represents a random variable • And is associated with the conditional probability of the node given its parents

B E A J M Intuition A BN can be understood as a DAG where arcs represent direct probability dependence • Absence of arc indicates probability independence: a variable is conditionally independent of all its nondescendants given its parents • From the graph: B ? E, J ? B | A, J ? E | A

Construction Procedure for constructing BN: • Choose a set of variables describing the application domain • Choose an ordering of variables • Start with empty network and add variables to the network one by one according to the ordering

Construction • To add i-th variable Xi: • Determine pa(Xi) of variables already in the network (X1, …, Xi – 1) such thatP(Xi | X1, …, Xi – 1) = P(Xi | pa(Xi))(domain knowledge is needed there) • Draw an arc from each variable in pa(Xi) to Xi

M J B M E J A A E B J M E A B Example • Order: B, E, A, J, M • pa(B)=pa(E)={}, pa(A)={B,E}, pa(J)={A}, pa{M}={A} • Order: M, J, A, B, E • pa{M}={}, pa{J}={M}, pa{A}={M,J}, pa{B}={A}, pa{E}={A,B} • Order: M, J, E, B, A • Fully connected graph

B M E J A E J M A B Construction Which variable order? • Naturalness of probability assessmentM, J, E, B, A is bad because of P(B | J, M, E) is not natural • Minimize number of arcsM, J, E, B, A is bad (too many arcs), the first is good • Use casual relationship: cause come before their effects M, J, E, B, A is bad because M and J are effects of A but come before A VS

Casual Bayesian Networks • A causal Bayesian network, or simply causal networks, is a Bayesian network whose arcs are interpreted as indicating cause-effect relationships • Build a causal network: • Choose a set of variables that describes the domain • Draw an arc to a variable from each of its direct causes (Domain knowledge required)

Example Smoking Visit Africa Lung Cancer Bronchitis Tuberculosis Tuberculosis orLung Cancer X-Ray Dyspnea

Causality is not a well understood concept. No widely accepted denition. No consensus on whether it is a property of the world or a concept in our minds Sometimes causal relations are obvious: Alarm causes people to leave building. Lung Cancer causes mass on chest X-ray. At other times, they are not that clear. Doctors believe smoking causes lung cancer but the tobacco industry has a different story: Casual BN Surgeon General (1964) S C Tobacco Industry * S C

Inference • Posterior queries to BN • We have observed the values of some variables • What are the posterior probability distributions of other variables? • Example: Both John and Mary reported alarm • What is the probability of burglary P(B|J=y,M=y)?

Inference • General form of query P(Q | E = e) = ? • Q is a list of query variables • E is a list of evidence variables • e denotes observed variables

Inference Types • Diagnostic inference: P(B | M = y) • Predictive/Casual Inference: P(M | B = y) • Intercasual inference (between causes of a common effect) P(B | A = y, E = y) • Mixed inference (combining two or more above) P(A | J = y, E = y) (diagnostic and casual) • All the types are handled in the same way

Naïve Inference Naïve algorithm for solving P(Q|E = e) in BN • Get probability distribution P(X) over all variables X by multiplying conditional probabilities • BN structure is not used, for many variables the algorithm is not practical • Generally exact inference is NP-hard

Inference • Though generally exact inference is NP-hard, in some cases the problem is tractable, e.g. if BN has a (poly)-tree structure efficient algorithm exists(a poly tree is a directed acyclic graph in which no two nodes have more than one path between them) • Another practical approach: Stochastic Simulation

A general sampling algorithm • For i = 1 to n • Find parents of Xi (Xp(i, 1), …, Xp(i, n) ) • Recall the values that those parents where randomly given • Look up the table for P(Xi | Xp(i, 1)= xp(i, 1), …, Xp(i, n)= xp(i, n) ) • Randomly set xi according to this probability

Stochastic Simulation • We want to know P(Q = q| E = e) • Do a lot of random samplings and count • Nc: Num. samples in which E = e • Ns: Num. samples in which Q = q and E = e • N: number of random samples • If N is big enough • Nc / N is a good estimate of P(E = e) • Ns / N is a good estimate of P(Q = q, E = e) • Ns / Nc is then a good estimate of P(Q = q | E = e)

Parameter Learning X2 X1 • Example: • given a BN structure • A dataset • Estimate conditional probabilities P(Xi | pa(Xi)) X4 X3 X5 ? means missing values

Parameter Learning • We consider cases with full data • Use maximum likelihood (ML) algorithm and bayesian estimation • Mode of learning: • Sequential learning • Batch learning • Bayesian estimation is suitable both for sequential and batch learning • ML is suitable only for batch learning

ML in BN with Complete Data • n variables X1, …, Xn • Number of states of Xi: ri = |Xi| • Number of configurations of parents of Xi: qi = |pa(Xi)| • Parameters to be estimated: ijk=P(Xi = j | pa(Xi) = k), i = 1, …, n; j = 1, …, ri; k = 1, …, qi

X2 X1 X3 ML in BN with Complete Data Example: consider a BN. Assume all variables are binary taking values 1, 2. ijk=P(Xi = j | pa(Xi) = k) Number of parents configuration

ML in BN with Complete Data • A complete case: Dl is a vector of values, one for each variable (all data is known).Example: Dl = (X1 = 1, X2 = 2, X3 = 2) • Given: A set of complete cases: D = {D1, …, Dm} • Find: the ML estimate of the parameters

X2 X1 X3 ML in BN with Complete Data • Loglikelihood:l( | D) = log L( | D) = log P(D | ) = log l P(Dl | ) = l log P(Dl | ) • The term log P(Dl | ): • D4 = (1, 2, 2) log P(D4 | ) = log P(X1 = 1, X2 = 2, X3 = 2 | ) = log P(X1=1 | ) P(X2=2 | ) P(X3=2 | X1=1, X2=2, )= log 111 + log 221 + log 322 • Recall: ={111,121,211,221,311,312,313,314,321,322,323, 324}

X2 X1 X3 ML in BN with Complete Data • Define the characteristic function of Dl: • When l = 4, D4 = {1, 2, 2}(1,1,1:D4)= (2,2,1:D4)= (3,2,2:D4)=1,(i, j, k: D4) = 0 for all other i, j, k • So, log P(D4 | ) = ijk(i, j, k: D4) log ijk • In general, log P(Dl | ) = ijk(i, j, k: Dl) log ijk

ML in BN with Complete Data • Define: mijk = l(i, j, k: Dl)the number of data cases when Xi = j and pa(Xi) = k • Then l( | D) = l log P(Dl | ) = li, j, k(i, j, k : Dl) log ijk=i, j, kl(i, j, k : Dl) log ijk=i, j, k mijk log ijk =i,kj mijk log ijk

ML in BN with Complete Data • We want to find:argmax l(| D) = argmax i,kj mijk log ijk ijk • Assume that ijk = P(Xi = j | pa(Xi) = k) is not related to i’j’k’ provided that i i’ OR k k’ • Consequently we can maximize separately each term in the summation i, k[…] argmax j mijk log ijkijk

ML in BN with Complete Data • As a result we have: • In words, the ML estimate for ijk = P( Xi = j | pa(Xi) = k) isnumber of cases where Xi=j and pa(Xi) = k number of cases where pa(Xi) = k

More to do with BN • Learning parameters with some values missing • Learning the structure of BN from training data • Many more…

References • Pearl, Judea, Probabilistic Reasoning in Intelligent Systems: Networks of Plausible Inference, Morgan Kaufmann, San Mateo, CA, 1988. • Heckerman, David, "A Tutorial on Learning with Bayesian Networks," Technical Report MSR-TR-95-06, Microsoft Research, 1995. • www.ai.mit.edu/~murphyk/Software • http://www.cs.ubc.ca/~murphyk/Bayes/bnintro.html • R. G. Cowell, A. P. Dawid, S. L. Lauritzen and D. J. Spiegelhalter. "Probabilistic Networks and Expert Systems". Springer-Verlag. 1999. • http://www.ets.org/research/conferences/almond2004.html#software