Advanced Machine Learning: Implementing SMO for Document Classification

Join this comprehensive course on Sequential Minimal Optimization (SMO) in the context of advanced machine learning. Learn to implement the SMO algorithm to classify documents into two categories: baseball and hockey. The course includes data preprocessing, feature selection using TF-IDF values, and splitting datasets for cross-validation. You'll explore KKT conditions, kernel functions, and heuristics for updating model parameters. Gain practical skills in precision, recall, and F1 score calculations. Perfect for aspiring data scientists and machine learning practitioners.

Advanced Machine Learning: Implementing SMO for Document Classification

E N D

Presentation Transcript

Sequential Minimal OptimizationAdvanced Machine Learning Course 2012 Fall SemesterTsinghua University

Goal • Implement SMO algorithm to classify the given set of documents as one of two classes "+1 or -1”. Xi : N dimension vector Class -1 m Class +1

SMO implementation We see the main parts in the implementation aspect of SMO. • SMO overview • Data Processing • KKT conditions • Learned function • Kernel • Heuristics to find alpha2 • Updating w and b( for new lagrange values) • Testing • Submission

Data Preprocessing • The dataset contains two newsgroups, one is baseball and the other is hockey. • For each document(feature selection), you can simply select the top 50 words(50 features)with highest tf-idfvalues( see Ref 4 ). Of course, you can do advanced preprocessing like stop word removal, word stemming, or define specific features by yourself. • An example feature file is given : • +1 1:0 2:6.87475407647932 3:3.58860800796358 4:0 22:7.2725475021777 30:0.228447564110819 49:2.1380940541094 56:3.84284214634782 90:2.83603740693284 114:8.60474895145252 131:2.41152410385398 • -1 1:0 4:0 30:0.228447564110819 78:0.915163336038847 116:2.4241825007259 304:3.47309512084174 348:13.941644886054 384:1.46427114637585 626:2.85545549278994 650:3.003091491596 • where each line "[label] [keyword1]:[value1] [keyword2]:[value2]… " represents a document • Label(+1, -1) is the classification of the document; • keyword_i is the global sequence number of a word in the whole dataset; • value_i is the tf-idf of the word appearing in the document. • The goal of data preprocessing: • Generate a feature file for the whole dataset, and then split it into five equal set of files say s1 to s5 for 5-fold Cross-validation.

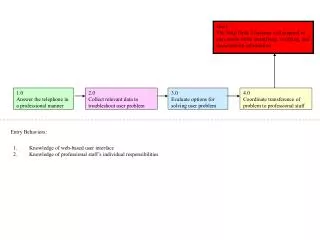

Learning & Test Processing • Input: feature files s1 to s5 • Steps: • Set i=1, take si as test set and others as training set • 2) Take the training set and learn the SMO (w and b for the data points) and store the learnt weights. • 3) Take the test set and using the weights learnt from Step 2, classify the documents as hockey or baseball. • 4) Calculate Precision, Recall and F1 score. • 5) i++ , Repeat Step 1, 2, and 3 until i> 5

Objective Function • The lecture in class showed us, we can: • Solve the dual more efficiently (fewer unknowns) • Add parameter C to allow some misclassifications • Replace xiTxj by more more general kernel term

Intuitive Introduction to SMO • SMO is essentially doing same thing with Perceptron learning algorithm • find a linear separator by adjusting weights on misclassified examples • Unlike perceptron, SMO has to maintain constraint. • Therefore, when SMO adjusts weight of one alpha example, it must alsoadjust weight of another alpha. Size of alpha is the size of training examples

SMO Algorithm • Input: C(say 0.5), kernel(linear), error cache, epsilon(tolerance) • Initializeb, w and all ’s to 0 • Repeat until KKT satisfied (to within epsilon): • Find an example ex1that violates KKT (prefer unbound examples (0<αi<C )here, choose randomly among those) • Choose a second example ex2. # Prefer one to maximize step size (in practice, faster to just maximize |E1 – E2|). # If that fails to result in change, randomly choose unbound example. # If that fails, randomly choose example. If that fails, re-choose ex1. • Update α1 and α2 in one step • Compute new threshold b

Karush-Kuhn-Tucker (KKT) Conditions • It is necessary and sufficient for a solution to our objective that all ’s satisfy the following: • An is 0 iff that example is correctly labeled with room to spare • An is C iff that example is incorrectly labeled or in the margin • An is properly between 0 and C (is “unbound”) iff that example is “barely” correctly labeled (is a support vector) • We just check KKT to within some small epsilon, typically 10-3 Unbound

Equality constraint ( cause to lie on diagonal line ) Inequality constraint ( causes lagrange multipliers to lie in the box )

Notations • SMO spends most of the time on adjusting the alphas of the non-boundary examples, so error cache is maintained for them. error_cache -> collection to hold the error of each training examples. • i1 and i2 are the indexes corresponding to ex1 and ex2. • Variables associated with i1 ends with 1 ( say alpha1 or alph1) and associated with i2 ends with 2( say alpha2 or alph2). • w – global weights - > size of unique number of words from given data set. • xi is ithtraining example. • eta - Objective function • a2 is new alpha2 value. • L & H -> Lower and upper range used to compute the feasibility range of new alpha a2(because new alpha are found on derivatives or objective function).

wxk-b -> learned_func Example: Error between predicted and actual

Kernel Common features between i1 and i2

Main Function Given the first alpha, examineExample(i1) first checks if it violates the KKT condition by more than tolerance and if not, jointly optimize two alphas by calling function takestep(i1,i2)

Heuristics to choose alpha2 Choose alpha2 such that (E1-E2) is maximized.

Feasibility range of alph2 Even before finding new alpha2 value, we have to find of feasibility range (L and H) value. Refer page number 11 of Reference 3 for the derivation regarding this.

New a2 (new alpha2) Objective function (page 9-11 of Reference 3 for derivation) Definite In indefinite case, SMO will move the Lagrange multipliers to the end point that has the lowest value of the objective function. Evaluated at each end(L and H). Read page 8 of Ref 2. Indefinite Lobj and Hobj – page 22 of Ref 3

Updating threshold b New b is calculated every time so that KKT fullfilled for both alphas. Updating the threshold with either of new alph1 or new alph2 non-boundary. If both of them not-boundary then average b1 and b2 (b1 is valid because it forces x1 to give y1 output) Refer page 9 of Reference 2.

Updating error cache using new lagrange multipliers Change in alph1 and alpha2 Change in b 1) Error cache of all other non-bound training examples are updated. 2) Error cache of alph1 and alph2 are updated to zero (since we already optimized together)

Updating w t1 and t2 -> Change in alpha1 and alpha2 respectively Global w w.r.t ex1 and ex2 should be updated.

SVM prediction with w and b • Now we have w and b calculated. For new xi , svm_prediction -> wxi-b

Precision & Recall Precision is the probability that a (randomly selected) retrieved document is relevant. Recall is the probability that a (randomly selected) relevant document is retrieved in a search. F1 Score

Points • eps too smaller value(more accuracy) may sometimes force SMO in non-exit loop. • After each alpha1 and alpha2 optimized, error cache of these two examples should be set to zero, and all other non-bound examples should be updated. • At each optimization step, w and b should be updated.

Reference Code • Pseudo-code • Reference 2 • C++ code • Reference 3

Submission • Implementation: Refer complete algorithm (for implementation) : http://research.microsoft.com/~jplatt/smoTR.pdf • Report Precision, Recall, F1 measure • Report time cost • Submit as .tar file, including 1 ) Source Code with necessary comments, including • Data preprocessing • SMO training, testing and evaluation 2) ReadMe.txt explaining • How to extract features for the dataset, try for different C values and explain the results. • Explain main functions, e.g., select alpha1 and alpha2, update alpha1 and alpha2, update w and b. • How to run your code, from data preprocessing to training/testing/evaluation, step by step. • What’s the final average precision, recall and F1 Score and time cost.

References • SVMby Sequential Minimal Optimization (SMO). Algorithm by John Platt. Lecture by David Page. pages.cs.wisc.edu/~dpage/cs760/MLlectureSMO.ppt • Platt(1998):http://research.microsoft.com/~jplatt/smoTR.pdf • Sequential Minimal Optimization for SVM. www.cs.iastate.edu/~honavar/smo-svm.pdf • http://en.wikipedia.org/wiki/Tf-idf • http://www.codeforge.com/read/156089/README.txt__html java code for SMO