Logical Bayesian Networks

Logical Bayesian Networks: A knowledge representation view on Probabilistic Logical Models. Daan Fierens, Hendrik Blockeel, Jan Ramon, Maurice Bruynooghe, Katholieke Universiteit Leuven, Belgium.



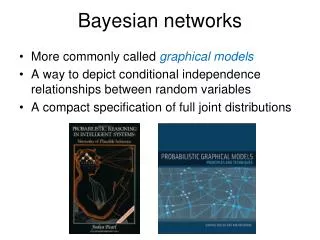

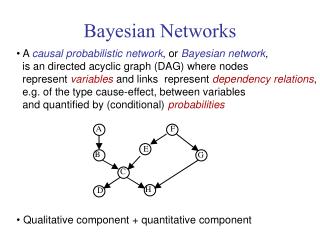

Probabilistic Logical Models • Variety of PLMs: • Origin in Bayesian Networks (Knowledge Based Model Construction): • Probabilistic Relational Models • Bayesian Logic Programs • CLP(BN) • … • Origin in Logic Programming: • PRISM • Stochastic Logic Programs • … [Figure annotations: "THIS TALK", "learning", "best known", "most developed"]

Combining PRMs and BLPs • PRMs: • + Easy to understand, intuitive • - Somewhat restricted (as compared to BLPs) • BLPs: • + More general, expressive • - Not always intuitive • Combine the strengths of both models in one model? • We propose Logical Bayesian Networks (PRMs+BLPs)

Overview of this Talk • Example • Probabilistic Relational Models • Bayesian Logic Programs • Combining PRMs and BLPs: Why and How? • Logical Bayesian Networks

Example [Koller et al.] • University: • students (IQ) + courses (rating) • students take courses (grade) • grade depends on IQ • rating depends on the sum of IQ’s • Specific situation: • jeff takes ai, pete and rick take lp, no student takes db

Bayesian Network-structure [Figure: nodes rating(db), rating(ai), rating(lp), iq(jeff), iq(pete), iq(rick), grade(jeff,ai), grade(rick,lp), grade(pete,lp)]

PRMs [Koller et al.] • PRM: relational schema, dependency structure (+ aggregates + CPDs) [Figure: schema with classes Student (iq), Course (rating) and Takes (grade); the dependencies are annotated "CPT" and "aggr + CPT"]

PRMs (2) • Semantics: PRM induces a Bayesian network on the relational skeleton

PRMs - BN-structure (3) [Figure: nodes rating(db), rating(ai), rating(lp), iq(jeff), iq(pete), iq(rick), grade(jeff,ai), grade(rick,lp), grade(pete,lp)]
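The grounding of a PRM on the relational skeleton can be sketched in a few lines of Python. This is a minimal sketch, not the PRM machinery of Koller et al. The dependency structure from the example (grade depends on the student's IQ; rating aggregates the IQs of the students taking the course) is hard-coded, and the function name `ground_prm` is hypothetical.

```python
# Relational skeleton of the running example: objects and the Takes relation.
students = ["jeff", "pete", "rick"]
courses = ["ai", "lp", "db"]
takes = [("jeff", "ai"), ("pete", "lp"), ("rick", "lp")]


def ground_prm(students, courses, takes):
    """Return a dict mapping each ground attribute to the list of its parents."""
    parents = {}
    for s in students:
        parents[f"iq({s})"] = []  # iq has no parents in this PRM
    for c in courses:
        # rating(c) aggregates the iq of every student taking c (possibly none)
        parents[f"rating({c})"] = [f"iq({s})" for (s, c2) in takes if c2 == c]
    for (s, c) in takes:
        # grade(s,c) exists only for pairs in Takes and depends on iq(s)
        parents[f"grade({s},{c})"] = [f"iq({s})"]
    return parents


bn = ground_prm(students, courses, takes)
for var, pa in bn.items():
    print(var, "<-", pa)
```

Because no student takes db, rating(db) ends up with an empty parent list. Its CPD then reduces to the aggregate applied to an empty set.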

PRMs: Pros & Cons (4) • Easy to understand and interpret • Expressiveness as compared to BLPs, …: • Not possible to combine selection and aggregation [Blockeel & Bruynooghe, SRL-workshop ‘03] • e.g. extra attribute sex for students • rating depends on the sum of IQ’s of female students • Specification of logical background knowledge? • (no functors, constants)

BLPs [Kersting, De Raedt] • Definite Logic Programs + Bayesian networks • Bayesian predicates (with a range) • Random variable = ground Bayesian atom, e.g. iq(jeff) • BLP = clauses with CPTs, e.g. rating(C) | iq(S), takes(S,C). with range {low,high} for rating/1, a CPT and a combining rule (can be anything) • Semantics: Bayesian network • random variables = ground atoms in the least Herbrand (LH) model • dependencies given by the grounding of the BLP
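In the clause rating(C) | iq(S), takes(S,C), the variable rating(lp) gets one ground instance per student taking lp, and each instance yields its own distribution. The combining rule merges these distributions into one CPD. The Python sketch below assumes a hypothetical CPT with invented numbers and treats iq as having the range {low, high}, which is also an assumption. It uses the arithmetic mean as the combining rule, which is only one possible choice, since the slide says the rule "can be anything".

```python
# Hypothetical CPT for the clause rating(C) | iq(S), takes(S,C):
# maps the value of iq(S) to a distribution over rating(C) in {low, high}.
CLAUSE_CPT = {
    "high": {"high": 0.8, "low": 0.2},
    "low": {"high": 0.3, "low": 0.7},
}


def mean_combining_rule(dists):
    """Combine one distribution per ground clause instance by averaging them."""
    if not dists:
        raise ValueError("no ground clause instances to combine")
    values = dists[0].keys()
    return {v: sum(d[v] for d in dists) / len(dists) for v in values}


def rating_distribution(iq_values):
    """iq_values: the value of iq(S) for every S with takes(S,C) in the LH-model."""
    per_clause = [CLAUSE_CPT[iq] for iq in iq_values]
    return mean_combining_rule(per_clause)


# rating(lp) has two ground instances of the clause: S = pete and S = rick.
print(rating_distribution(["high", "low"]))
```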

BLPs (2) • rating(C) | iq(S), takes(S,C). rating(C) | course(C). grade(S,C) | iq(S), takes(S,C). iq(S) | student(S). student(pete). … course(lp). … takes(rick,lp). • BLPs do not distinguish probabilistic and logical/certain/structural knowledge • Influence on readability of clauses • What about the resulting Bayesian network?
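The BLP semantics (random variables = ground atoms in the LH-model, dependencies = ground clause instances) can be reproduced with naive bottom-up evaluation. The Python sketch below assumes that the facts are the full example situation and encodes atoms as tuples, with uppercase strings acting as logical variables. The functions `match`, `substitute` and `ground_blp` are hypothetical helpers, not part of any BLP system.

```python
# Atoms are tuples (predicate, arg1, ...); arguments starting with an
# uppercase letter are logical variables, everything else is a constant.
CLAUSES = [
    (("rating", "C"), [("iq", "S"), ("takes", "S", "C")]),
    (("rating", "C"), [("course", "C")]),
    (("grade", "S", "C"), [("iq", "S"), ("takes", "S", "C")]),
    (("iq", "S"), [("student", "S")]),
]
FACTS = {
    ("student", "jeff"), ("student", "pete"), ("student", "rick"),
    ("course", "ai"), ("course", "lp"), ("course", "db"),
    ("takes", "jeff", "ai"), ("takes", "pete", "lp"), ("takes", "rick", "lp"),
}


def is_var(term):
    return term[0].isupper()


def match(atom, fact, subst):
    """Extend subst so that atom becomes equal to fact; None if impossible."""
    if atom[0] != fact[0] or len(atom) != len(fact):
        return None
    extended = dict(subst)
    for a, f in zip(atom[1:], fact[1:]):
        if is_var(a):
            if extended.setdefault(a, f) != f:
                return None
        elif a != f:
            return None
    return extended


def substitute(atom, subst):
    return (atom[0],) + tuple(subst.get(a, a) for a in atom[1:])


def ground_blp(clauses, facts):
    """Naive bottom-up fixpoint: least Herbrand model + ground clause instances."""
    model, instances = set(facts), set()
    changed = True
    while changed:
        changed = False
        for head, body in clauses:
            substs = [{}]
            for literal in body:
                substs = [s2 for s in substs for fact in model
                          if (s2 := match(literal, fact, s)) is not None]
            for s in substs:
                head_g = substitute(head, s)
                instances.add((head_g, tuple(substitute(b, s) for b in body)))
                if head_g not in model:
                    model.add(head_g)
                    changed = True
    return model, instances


model, instances = ground_blp(CLAUSES, FACTS)
# Every ground atom in the LH-model is a random variable, so the parents of a
# node are the union of the bodies of its ground clause instances.
parents = {}
for head_g, body_g in instances:
    parents.setdefault(head_g, set()).update(body_g)
print(sorted(parents[("grade", "jeff", "ai")]))
```

The output shows the issue the slide raises: takes(jeff,ai) and student(jeff) become random variables and parents, even though they carry only structural knowledge.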

BLPs - BN-structure (3) • Fragment: [Figure: nodes takes(jeff,ai), student(jeff), iq(jeff), grade(jeff,ai); CPD for iq(jeff) given student(jeff): student(jeff) = true: distribution for iq/1; student(jeff) = false: ?]

BLPs - BN-structure (3) • Fragment: [Figure: nodes takes(jeff,ai), student(jeff), iq(jeff), grade(jeff,ai); CPD for grade(jeff,ai) given takes(jeff,ai): takes(jeff,ai) = true: distribution for grade/2, function of iq(jeff); takes(jeff,ai) = false: ?]

BLPs: Pros & Cons (4) • High expressiveness: • Definite Logic Programs (functors, …) • Can combine selection and aggregation (combining rules) • Not always easy to interpret • the clauses • the resulting Bayesian network

Combining PRMs and BLPs • Why? • 1 model = intuitive + high expressiveness • How? • Expressiveness (from BLPs): • Logic Programming • Intuitive (from PRMs): • Distinguish probabilistic and logical/certain knowledge • Distinct components (PRMs: schema determines random variables / dependency structure) • (General vs. specific knowledge)

Logical Bayesian Networks • Probabilistic predicates (variables, range) vs. logical predicates • LBN components (PRM counterpart in parentheses): • V (relational schema) • DE (dependency structure) • DI (CPDs + aggregates) • Logic program Pl (relational skeleton): description of the domain of discourse (DoD) / deterministic info
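The four LBN components can be held in one container that keeps probabilistic and logical predicates apart. The Python dataclass below is only a sketch: the class name `LBN`, its field layout and the tuple encoding of clauses are assumptions made for illustration. Only a few facts of Pl are included.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet, List, Set, Tuple

Atom = Tuple[str, ...]                       # e.g. ("takes", "S", "C")
Distribution = Dict[str, float]              # over the range of a predicate
LogicalCPD = Callable[[FrozenSet[Tuple[Atom, object]]], Distribution]


@dataclass
class LBN:
    probabilistic: Set[str]                  # probabilistic predicate names
    V: List[Tuple[Atom, List[Atom]]]         # head <= body
    DE: List[Tuple[Atom, List[Atom], List[Atom]]]  # (child, parents, context)
    DI: Dict[str, LogicalCPD]                # one logical CPD per probabilistic predicate
    Pl: List[Atom] = field(default_factory=list)   # logical facts (a normal program in general)

    def is_logical(self, predicate: str) -> bool:
        # every predicate that is not declared probabilistic is logical
        return predicate not in self.probabilistic


example = LBN(
    probabilistic={"iq", "rating", "grade"},
    V=[(("iq", "S"), [("student", "S")]),
       (("rating", "C"), [("course", "C")]),
       (("grade", "S", "C"), [("takes", "S", "C")])],
    DE=[(("grade", "S", "C"), [("iq", "S")], []),
        (("rating", "C"), [("iq", "S")], [("takes", "S", "C")])],
    DI={},  # logical CPDs, e.g. for rating/1, would be registered here
    Pl=[("student", "jeff"), ("course", "ai"), ("takes", "jeff", "ai")],
)
```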

Logical Bayesian Networks • Semantics: • LBN induces a Bayesian network on the variables determined by Pl and V

Normal Logic Program Pl • student(jeff). course(ai). takes(jeff,ai). student(pete). course(lp). takes(pete,lp). student(rick). course(db). takes(rick,lp). • Semantics: well-founded model WFM(Pl) (when there is no negation: the least Herbrand model)

V • iq(S) <= student(S). rating(C) <= course(C). grade(S,C) <= takes(S,C). • Semantics: determines the random variables • each ground probabilistic atom in WFM(Pl ∪ V) is a random variable • iq(jeff), …, rating(lp), …, grade(rick,lp) • non-monotonic negation (not in PRMs, BLPs): • grade(S,C) <= takes(S,C), not(absent(S,C)).
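For this example, computing the random variables needs no general well-founded-model solver. Pl consists only of facts, and the V clauses test only logical predicates, so the program is stratified: first take the facts of Pl, then apply each V clause once. The Python sketch below hard-codes the three V clauses and the optional `not(absent(S,C))` condition. The fact absent(rick,lp) and the function name `random_variables` are hypothetical.

```python
# Logical program Pl (facts only) from the running example.
PL = {
    ("student", "jeff"), ("student", "pete"), ("student", "rick"),
    ("course", "ai"), ("course", "lp"), ("course", "db"),
    ("takes", "jeff", "ai"), ("takes", "pete", "lp"), ("takes", "rick", "lp"),
}


def random_variables(pl, use_negation=False):
    """Ground probabilistic atoms derived by the V clauses from the facts of Pl.

    Pl has no negation and V only refers to logical predicates, so here the
    well-founded model is simply: the facts of Pl, then one application of V.
    """
    rvs = set()
    for fact in pl:
        if fact[0] == "student":                  # iq(S) <= student(S).
            rvs.add(("iq", fact[1]))
        elif fact[0] == "course":                 # rating(C) <= course(C).
            rvs.add(("rating", fact[1]))
        elif fact[0] == "takes":                  # grade(S,C) <= takes(S,C)[, not(absent(S,C))].
            s, c = fact[1], fact[2]
            if not (use_negation and ("absent", s, c) in pl):
                rvs.add(("grade", s, c))
    return rvs


print(len(random_variables(PL)))
# Hypothetical extra fact: rick was absent from lp, so with the negated
# condition grade(rick,lp) is no longer a random variable.
rvs_absent = random_variables(PL | {("absent", "rick", "lp")}, use_negation=True)
```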

DE • grade(S,C) | iq(S). rating(C) | iq(S) <- takes(S,C). • Semantics: determines the conditional dependencies • ground instances whose context holds in WFM(Pl) • e.g. rating(lp) | iq(pete) <- takes(pete,lp) • e.g. rating(lp) | iq(rick) <- takes(rick,lp)
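Grounding DE then amounts to keeping each ground instance whose head and body are random variables and whose context (after `<-`) holds in WFM(Pl). The Python sketch below hard-codes the V and DE clauses of the example. The names `random_variables` and `ground_dependencies` are hypothetical.

```python
PL = {
    ("student", "jeff"), ("student", "pete"), ("student", "rick"),
    ("course", "ai"), ("course", "lp"), ("course", "db"),
    ("takes", "jeff", "ai"), ("takes", "pete", "lp"), ("takes", "rick", "lp"),
}


def random_variables(pl):
    """V: iq(S) <= student(S).  rating(C) <= course(C).  grade(S,C) <= takes(S,C)."""
    rvs = {("iq", f[1]) for f in pl if f[0] == "student"}
    rvs |= {("rating", f[1]) for f in pl if f[0] == "course"}
    rvs |= {("grade", f[1], f[2]) for f in pl if f[0] == "takes"}
    return rvs


def ground_dependencies(pl, rvs):
    """Map each random variable to its parents, following the two DE clauses."""
    parents = {rv: set() for rv in rvs}
    # grade(S,C) | iq(S).   (no context: one instance per grade variable)
    for rv in rvs:
        if rv[0] == "grade" and ("iq", rv[1]) in rvs:
            parents[rv].add(("iq", rv[1]))
    # rating(C) | iq(S) <- takes(S,C).   (instance only if takes(S,C) holds in Pl)
    for fact in pl:
        if fact[0] == "takes":
            s, c = fact[1], fact[2]
            if ("rating", c) in rvs and ("iq", s) in rvs:
                parents[("rating", c)].add(("iq", s))
    return parents


deps = ground_dependencies(PL, random_variables(PL))
for var in sorted(deps):
    print(var, "<-", sorted(deps[var]))
```

Unlike the BLP grounding, takes/2 and student/1 only filter instances here and never become nodes of the network.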

V + DE • V: iq(S) <= student(S). rating(C) <= course(C). grade(S,C) <= takes(S,C). • DE: grade(S,C) | iq(S). rating(C) | iq(S) <- takes(S,C).

LBNs - BN-structure [Figure: nodes rating(db), rating(ai), rating(lp), iq(jeff), iq(pete), iq(rick), grade(jeff,ai), grade(rick,lp), grade(pete,lp)]

DI • The quantitative component • ~ in PRMs: aggregates + CPDs • ~ in BLPs: CPDs + combining rules • For each probabilistic predicate p, a logical CPD • = function with • input: set of pairs (ground probabilistic atom, value) • output: probability distribution for p • Semantics: determines the CPDs for all variables about p
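Because a logical CPD takes a set of (atom, value) pairs, one function yields CPDs for variables with any number of parents. The Python sketch below builds the full table for each rating/1 variable from the rating/1 rule used in this talk (sum of IQs > 1000). The parent lists come from the running example. The IQ range {100, 600} is a hypothetical discretization introduced only so the table rows can be enumerated, and the names `rating_cpd` and `cpt` are hypothetical.

```python
from itertools import product


def rating_cpd(inputs):
    """Logical CPD for rating/1: inputs is a set of (ground atom, value) pairs."""
    total = sum(val for (atom, val) in inputs if atom[0] == "iq")
    return {"high": 0.7, "low": 0.3} if total > 1000 else {"high": 0.5, "low": 0.5}


# Parents of each rating/1 variable in the running example (from DE and Pl).
PARENTS = {
    ("rating", "ai"): [("iq", "jeff")],
    ("rating", "lp"): [("iq", "pete"), ("iq", "rick")],
    ("rating", "db"): [],
}
IQ_VALUES = [100, 600]  # hypothetical finite range, only to enumerate table rows


def cpt(var, logical_cpd):
    """Build the full conditional probability table of one ground variable."""
    parents = PARENTS[var]
    table = {}
    for values in product(IQ_VALUES, repeat=len(parents)):
        inputs = frozenset(zip(parents, values))
        table[values] = logical_cpd(inputs)
    return table


for var in PARENTS:
    print(var, cpt(var, rating_cpd))
```

rating(ai) gets 2 rows, rating(lp) gets 4, and rating(db) a single row for the empty parent set, all from the same logical CPD.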

DI (2) • e.g. for rating/1 (inputs are about iq/1): If $\sum_{(iq(S),\,Val)} Val > 1000$ Then 0.7 high / 0.3 low Else 0.5 high / 0.5 low • Can be written as a logical probability tree (TILDE) with root test sum(Val, iq(S,Val), Sum), Sum > 1000 and leaves 0.7 / 0.3 (test succeeds) and 0.5 / 0.5 (test fails) • cf. [Van Assche et al., SRL-workshop ‘04]
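A logical probability tree can be modelled as internal nodes with a test on the input set and leaves holding distributions. This Python sketch is not TILDE's representation: the tests are plain Python functions standing in for Prolog queries such as sum(Val, iq(S,Val), Sum), Sum > 1000. The classes `Leaf`/`Node` and the function `predict` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, Tuple, Union

Inputs = FrozenSet[Tuple[Tuple[str, ...], float]]


@dataclass
class Leaf:
    dist: Dict[str, float]


@dataclass
class Node:
    test: Callable[[Inputs], bool]  # stands in for a Prolog query on the inputs
    yes: "Tree"
    no: "Tree"


Tree = Union[Leaf, Node]


def predict(tree, inputs):
    """Walk from the root to a leaf, following the outcome of each test."""
    while isinstance(tree, Node):
        tree = tree.yes if tree.test(inputs) else tree.no
    return tree.dist


def sum_iq_above_1000(inputs):
    # sum(Val, iq(S,Val), Sum), Sum > 1000
    return sum(v for (atom, v) in inputs if atom[0] == "iq") > 1000


RATING_TREE = Node(sum_iq_above_1000,
                   Leaf({"high": 0.7, "low": 0.3}),
                   Leaf({"high": 0.5, "low": 0.5}))
```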

DI (3) • DI determines the CPDs • e.g. the CPD for rating(lp) is a function of iq(pete) and iq(rick) • Entry in the CPD for iq(pete)=100 and iq(rick)=120? • Apply the logical CPD for rating/1 to {(iq(pete),100),(iq(rick),120)}: If $\sum_{(iq(S),\,Val)} Val > 1000$ Then 0.7 high / 0.3 low Else 0.5 high / 0.5 low • Result: 100 + 120 = 220 is not > 1000, so the probability distribution is 0.5 high / 0.5 low
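The worked entry above can be traced in Python. The parents of rating(lp) come from the ground DE instances whose context takes(S,lp) holds, the parent values are paired with their atoms, and the logical CPD is applied to the resulting set. This is a minimal sketch with only the takes/2 facts of Pl. The helper names `parents_of_rating` and `cpd_entry` are hypothetical.

```python
PL = {("takes", "jeff", "ai"), ("takes", "pete", "lp"), ("takes", "rick", "lp")}


def rating_cpd(inputs):
    """Logical CPD for rating/1 over a set of (ground atom, value) pairs."""
    total = sum(val for (atom, val) in inputs if atom[0] == "iq")
    return {"high": 0.7, "low": 0.3} if total > 1000 else {"high": 0.5, "low": 0.5}


def parents_of_rating(course, pl):
    # ground instances of rating(C) | iq(S) <- takes(S,C) whose context holds in Pl
    return sorted(("iq", f[1]) for f in pl if f[0] == "takes" and f[2] == course)


def cpd_entry(course, assignment, pl):
    """One entry of the CPD of rating(course) for the given parent values."""
    inputs = frozenset((p, assignment[p]) for p in parents_of_rating(course, pl))
    return rating_cpd(inputs)


entry = cpd_entry("lp", {("iq", "pete"): 100, ("iq", "rick"): 120}, PL)
print(entry)
```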

DI (4) • Combine selection and aggregation? • e.g. rating depends on the sum of IQ’s of female students: tree test sum(Val, (iq(S,Val), sex(S,fem)), Sum), Sum > 1000, with leaves 0.7 / 0.3 and 0.5 / 0.5 • again cf. [Van Assche et al., SRL-workshop ‘04]
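Selection and aggregation combine naturally once the logical CPD sees all (atom, value) pairs: it first selects the students whose sex is fem and then sums their IQs. The Python sketch below assumes that sex/1 is an extra probabilistic attribute whose pairs are also inputs. That would require a DE clause such as rating(C) | iq(S), sex(S) <- takes(S,C), which is a hypothetical extension. The students ann, bob and cat and the function names are hypothetical as well.

```python
def sum_female_iq(inputs):
    # sum(Val, (iq(S,Val), sex(S,fem)), Sum): select female students, then aggregate
    values = dict(inputs)
    return sum(v for (atom, v) in values.items()
               if atom[0] == "iq" and values.get(("sex", atom[1])) == "fem")


def rating_female_cpd(inputs):
    """Logical CPD for rating/1 using selection + aggregation."""
    if sum_female_iq(inputs) > 1000:
        return {"high": 0.7, "low": 0.3}
    return {"high": 0.5, "low": 0.5}


# Hypothetical course with ann (fem, 700) and bob (male, 900): the plain sum
# is 1600, but only ann is selected, so the female sum is 700.
inputs = frozenset({(("iq", "ann"), 700), (("sex", "ann"), "fem"),
                    (("iq", "bob"), 900), (("sex", "bob"), "male")})
print(rating_female_cpd(inputs))
```

An unselected aggregate (the plain sum of 1600) would take the other branch, which is exactly the case PRMs cannot express.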

LBNs: Pros & Cons / Conclusion • Qualitative part (V + DE): easy to interpret • High expressiveness • Normal Logic Programs (non-monotonic negation, functors, …) • Combining selection and aggregation • Comes at a cost: • Quantitative part (DI) is more difficult (than for PRMs)

Future Work: Learning LBNs • Learning algorithms for PRMs & BLPs • On a high level: an appropriate mix will probably do for LBNs • LBNs vs. PRMs: learning the quantitative component is more difficult for LBNs • LBNs vs. BLPs: • LBNs have the separation V vs. DE • LBNs: the distinction between probabilistic and logical predicates = bias (but also used by BLPs in practice)