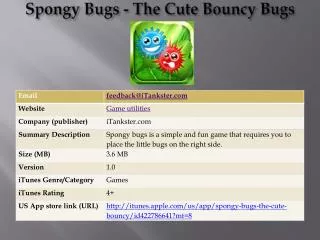

Bugs

Bugs. Strategy. Remember, these models are inherently complex You don’t want to make them needlessly complex Start with lm/glm Estimate in lmer Move to Bugs You can do the first two. Bayesian Inference and priors. The difficulty is in estimating the two levels of model simultaneously.

Bugs

E N D

Presentation Transcript

Strategy • Remember, these models are inherently complex • You don’t want to make them needlessly complex • Start with lm/glm • Estimate in lmer • Move to Bugs • You can do the first two

Bayesian Inference and priors • The difficulty is in estimating the two levels of model simultaneously. • The Bayesian technique is to put priors on the group level effects • Technically Bayesian estimation requires priors on everything. • Regression uses uninformative priors • Prior on coefficient is a uniform (-∞, ∞) • Prior on error variance is a uniform (0, ∞) • Sets the possible range, but places little probability mass on each point

Priors • GLM models place uninformative uniform priors on the parameters, where the scale is determined by acceptable values. • Varying intercept model • The distribution of the intercept is a prior distribution. • The parameters of this distribution (the mean and variance) are hyperparameters to be estiamted

Priors • All parameters need priors, however • Typically set these to an uninformative uniform • Combine them all into a single probability function • Which is the product of the densities of the individual prior to form a joint PDF

Priors • Varying slope • Much more complicated • Want the priors to recognize the connection between the random terms in the model • Use a multivariate normal distribution for the error variance/covariance matrix • Independent uniforms on the hyperparameters • Adding predictors complicates things

Priors • Add a z to the random intercept model • This changes the priors needed • Usually just use an independent uninformative uniform • Call the first equation the data model or likelihood • Second equation is the group level model or prior model

Priors • The group terms have different priors than the likelihood terms • For group j, the intercept has a prior with mean: • And standard deviation τ. • Note this prior itself depends on the gamma terms and the tau—which also have priors. • The priors for the different j terms are different because the z term is different.

Priors • Different means requires different distributions. • Technically, this means they are not “exchangeable” • A sequence of random variables is exchangeable iff a re-sequencing of the variables does not change the joint pdf of the sequence • Essentially, the sequencing does not matter, the variables are iid • Different means says not iid

Priors • Alternative: exchangeable prior distribution on the u’s. • The intercepts are determined by group level predictors z and the u’s which are assigned one prior. • Represents the possible values of u from a hypothetical population from which the J groups were sampled

Noninformative • Bayesian estimation allows you to combine the information in the data with other information. • Priors—summarize the information outside of the data • So, what is your prior about the coefficients? • Answer to this shapes answer to inference—goes into the posterior

Noninformative • These priors are designed to allow the data to have maximum influence over the posterior. • “Objective Bayesian” (Jim Berger) • Uses powerful machinery of Bayesian estimation without the subjective baggage • The key is to choose values that easily encompass (dwarf) the possible range of the values of the parameter • Too far is a bad thing though, because incredible small values are unstable

Fitting the models • This is the fun part • Radon data again (no, I don’t know why this is the default dataset). • R does not actually estimate the model • WinBUGS does. • But we don’t need to use WinBUGS directly because R will call it • This is a really nice part of R

Bugs • Keep in mind, Bugs needs a specification for every: • Data point • Group level parameter • Hyper parameter • Variance • YOU have to make these choices. There is no point and click • Specification means: • Prior • Distribution • Assignment • Temporary (link to higher assignment) • Data

Bugs code # Bugs code for multilevel model for radon # with bsmt as an individual predictor # varying-intercept model model { for (i in 1:n){ y[i] ~ dnorm (y.hat[i], tau.y) y.hat[i] <- a[county[i]] + b*x[i] } b ~ dnorm (0, .0001) tau.y <- pow(sigma.y, -2) sigma.y ~ dunif (0, 100) for (j in 1:J){ a[j] ~ dnorm (mu.a, tau.a) } mu.a ~ dnorm (0, .0001) tau.a <- pow(sigma.a, -2) sigma.a ~ dunif (0, 100) }

model { for (i in 1:n){ y[i] ~ dnorm (y.hat[i], tau.y) y.hat[i] <- a[county[i]] + b*x[i] } This begins the model. Everything until the final } is the model This loop defines y for each observation i Normal mean y.hat precision tau.y y.hat is defined as constant (a) indexed by county i and the product of b and the variable x. a and b will be estiamted Bugs code

Bugs note • We cannot write the model in one line as: • y[i] ~ dnorm(a[county[i]] + b*x[i], tau.y) • It is too complex • Need to break it into two pieces, wehre we first define y[i] as having some mean we haven’t defined yet. Then define the mean.

b ~ dnorm (0, .0001) tau.y <- pow(sigma.y, -2) sigma.y ~ dunif (0, 100) Priors b is a normal, mean 0, and precision 0.0001 Precision is 1/variance Tau.y is defined as sigma.y raised to the -2 power Pow raises the first element to the power of the second element Sigma.y is distributed uniform, 0-100 Bugs code

for (j in 1:J){ a[j] ~ dnorm(mu.a, tau.a) } mu.a ~ dnorm (0, .0001) tau.a <- pow(sigma.a, -2) sigma.a ~ dunif (0, 100) } A loop defining the priors on the a[j] terms. They are each distributed normally with mean mu.a and tau.a mu.a has a normal prior, mean 0 and precision 0.0001 Tau.a is defined as sigma.a raised to the -2 power Sigma.a has a uniform (0,100) prior. } ends the model Bugs code

Back to R • That file is saved as radon.1.bug • You need R to call Bugs and that file.

Calling Bugs code radon.data <- list ("n", "J", "x", "y", "county") radon.inits <- function (){ list (a=rnorm(J), b=rnorm(1), mu.a=rnorm(1), sigma.y=runif(1), sigma.a=runif(1)) } radon.parameters <- c ("a", "b", "mu.a", "sigma.y", "sigma.a") radon.1 <- bugs (radon.data, radon.inits, radon.parameters, "radon.1.bug", n.chains=3, n.iter=500) plot (radon.1) radon.1.noburnin <- bugs (radon.data, radon.inits, radon.parameters, "radon.1.bug", n.chains=3, n.iter=500, n.burnin=0)

radon.data <- list ("n", "J", "x", "y", "county") radon.inits <- function (){ list (a=rnorm(J), b=rnorm(1), mu.a=rnorm(1), sigma.y=runif(1), sigma.a=runif(1)) } Collect all of the things you are going to pass to Bugs into a single object. This is the data, indexing parameters (n and J) Create the initial values Remember MCMC models need initial values of the parameters to run a they are j draws from a random normal (as drawn by R) B is 1 draw from a random normal The sigma’s are drawn from a random uniform (0,1) This is not necessary (bugs will create but that can create problems) Calling Bugs code

Bugs note • Need to double check that everything in the .parameters line has a prior and a initial value. If not it will choke.

radon.parameters <- c ("a", "b", "mu.a", "sigma.y", "sigma.a") radon.1db <- bugs (radon.data, radon.inits, radon.parameters, "radon.1.bug", n.chains=3, n.iter=10, debug=TRUE) Defines the parameters to be estimated Defines object radon.1db Calls bugs Passes things Radon.data Radon.inits Radon.parameters n.chains—how many different (Markov) chains should be run N.iter defines the nubmer of iterations in the Monte Carlo Debug tells it we want to make sure it is ok. R cannot run again until WinBUGS is closed. R code

Bugs note • What are chains? • We are setting up a Markov chain that will, if it iterates long enough converge on the true joint posterior density. • A couple of potential problems • Autocorrelation • Influence of starting values • Initial solution: Multiple chains

Chains • Different starting values (different draws from the distributions) create different chains • Comparing helps isolate effect of initial value • Pooling helps minimize the autocorrelations

Burn in • We want to infer about the posterior and need the Monte Carlo to be draws from this posterior. • We will get there, but we do not start there • Need to discard all of the iterations before we converge • How tell convergence? Start by looking at the chains (run noburnin)

Iterations • Once we are in the posterior how many iterations do we need? • Start with a small number to make sure it runs • 1000 is generally enough to summarize results

More R code plot (radon.1) Print(radon.1) radon.1.noburnin <- bugs (radon.data, radon.inits, radon.parameters, "radon.1.bug", n.chains=3, n.iter=500, n.burnin=0)

plot (radon.1) print(radon.1) Displays the results of the run in a graph Results from the run First seven columns summarize the model parameters Rhat is the square root of the variance of the mixture of the chains, divided by the average within-chain variance 1 is great. <1.1 acceptable. It is a sign of how well the chains are mixing—compare this to 50 iterations. N.eff is the number of effective simulations—this accounts for the autocorrelation in the Monte Carlo part. 100 is usually acceptable We will come back to the stuff at the bottom Output

Results • That’s too much info • We should graph stuff • Easy to do in R • We created object radon.1 that has 750 simulated draws from the posteriors of all of the parameters we asked for. • We can use this to summarize the results however we want.

R code calling Bugs output attach.bugs (radon.1) a.multilevel <-rep(NA,J) for (j in 1:J){ a.multilevel[j] <-median(a[,j]) } b.multilevel <-median(b)

attach.bugs (radon.1) a.multilevel <-rep(NA,J) for (j in 1:J){ a.multilevel[j] <-median(a[,j]) } This is an attach command, but specific to bugs. Defines a.multilevel through a loop of the J items in the a output matrix from bugs. This is the median draw from the Monte Carlo of the posterior of a R code

b.multilevel <-median(b) Defines the object b.multilevel as the median draw of the b object passed back from Bugs. a is made up of the J intercepts. b is fixed across counties so there is only one b (though there are 250 draws from the posterior R code

Adding a group level predictor model { for (i in 1:n){ y[i] ~ dnorm (y.hat[i], tau.y) y.hat[i] <- a[county[i]] + b*x[i] } b ~ dnorm (0, .0001) tau.y <- pow(sigma.y, -2) sigma.y ~ dunif (0, 100) for (j in 1:J){ a[j] ~ dnorm (a.hat[j], tau.a) a.hat[j] <- g.0 + g.1*u[j] } g.0 ~ dnorm (0, .0001) g.1 ~ dnorm (0, .0001) tau.a <- pow(sigma.a, -2) sigma.a ~ dunif (0, 100) }

model { for (i in 1:n){ y[i] ~ dnorm (y.hat[i], tau.y) y.hat[i] <- a[county[i]] + b*x[i] } b ~ dnorm (0, .0001) tau.y <- pow(sigma.y, -2) sigma.y ~ dunif (0, 100) Look similar to before

for (j in 1:J){ a[j] ~ dnorm (a.hat[j], tau.a) a.hat[j] <- g.0 + g.1*u[j] } This is where the change comes Not that u[j] is included in the macro equation Different from lmer Size of u also different from size of x

g.0 ~ dnorm (0, .0001) g.1 ~ dnorm (0, .0001) tau.a <- pow(sigma.a, -2) sigma.a ~ dunif (0, 100) } Extension of earlier priors Not much new here either

Principles • There are several classes of objects and the differences matter • Data • These are the data and are specified by the data input in the bugs() call. • Modeled data: they have a “~”. Only the dv here • Unmodeled data: these are treated as fixed and have not distribution. X, u, n, J, county

Looping indices • These are the for loops • Missing data “NA” • Bugs will estimate these as if they are parameters • But if they are unmodeled bugs blows up • Need to pre-process them

Parameters • Modeled. These are assigned “informative” priors—they link toe the hyperparameters • a (which depend on a.hat and tau.a) • Unmodeled. These have noninformative priors (or unlinked priors). B, g.0, g.1, sigma.y, sigma.a • Derived quantities • These are defined deterministically (“<-”) • Y.hat, tau.y, a.hat, tau.a

Practical issues (an intro) • Convergence • How many chains for how long? • Step one is debug • Step two is short run (n.iter ~=500) • Check convergence • If converged stop • If close do a larger run (1000-2000) • If not even close, re-jigger • Don’t need many chains

Practical issues • Initial values • These need to be “reasonable” • Distinct • Usually specify a random draw

Complexities • Bugs can do really complicated things • We will do some of these soon • Nonlinear and non-additive is easy • Logic works the same as R • Interaction in Bugs code • y.hat[i]<-a[school[i]]+b[1]*x[i]+b[2]*x[i]*z[i]

complexities • Heteroskedasticity • Y[i]<-dnorm(y.hat[i], tau.y[T[group[i]+1}}) • For (k in 1:2){ • tau.y[k] <-pow(sigma.y[k], -2) • sigma.y[k]~dunif(0,100) • } • There are 2 groups and we want to estimate separate variances

Next time • Random intercepts • Multilevel logistic regression

Varying slope • What needs to change? model { for (i in 1:n){ y[i] ~ dnorm (y.hat[i], tau.y) y.hat[i] <- a[county[i]] + b*x[i] } b ~ dnorm (0, .0001) tau.y <- pow(sigma.y, -2) sigma.y ~ dunif (0, 100) for (j in 1:J){ a[j] ~ dnorm (a.hat[j], tau.a) a.hat[j] <- mu.a } mu.a ~ dnorm (0, .0001) tau.a <- pow(sigma.a, -2) sigma.a ~ dunif (0, 100) }

Old code model { for (i in 1:n){ y[i] ~ dnorm (y.hat[i], tau.y) y.hat[i] <- a[county[i]] + b*x[i] } New code model { for (i in 1:n){ y[i] ~ dnorm (y.hat[i], tau.y) y.hat[i] <- a[county[i]] + b[county[i]*x[i] } Adding the varying slope

Old code b ~ dnorm (0, .0001) tau.y <- pow(sigma.y, -2) sigma.y ~ dunif (0, 100) New code tau.y <- pow(sigma.y, -2) sigma.y ~ dunif (0, 100) Priors

Old code for (j in 1:J){ a[j] ~ dnorm (a.hat[j], tau.a) a.hat[j] <- mu.a } New code for (j in 1:J){ a[j] ~ dnorm (a.hat[j], tau.a) b[j] ~ dnorm (b.hat[j], tau.b) a.hat[j] <- mu.a b.hat[j] <- mu.b } Varying loop