Understanding the Central Limit Theorem and Conditional Expectations in Probability

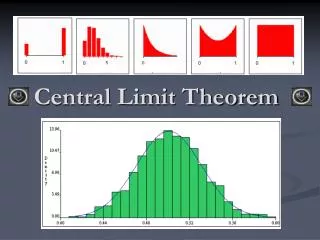

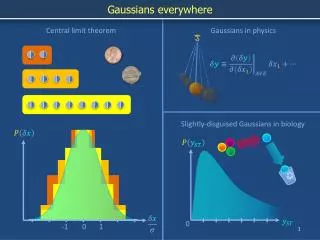

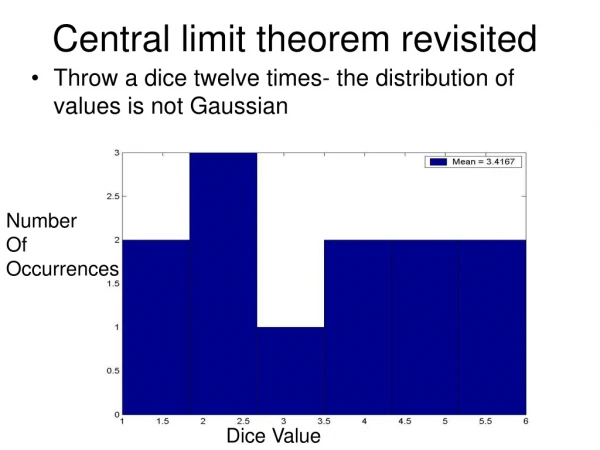

This text reviews the Central Limit Theorem: the sum of independent, identically distributed random variables is approximately normal for large sample sizes, with a mean and standard deviation that follow directly from those of a single variable. A practical example applies the theorem to a particle moving over many time steps and asks for a probability about its final position. The text then defines the conditional expectation of a random variable given an event, E(Y|A), and states the rule of average conditional expectations.

Presentation Transcript

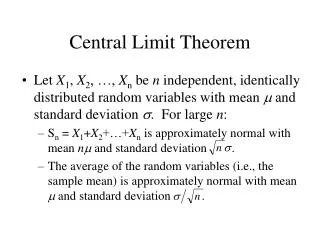

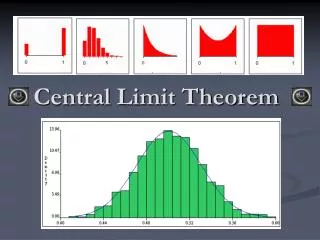

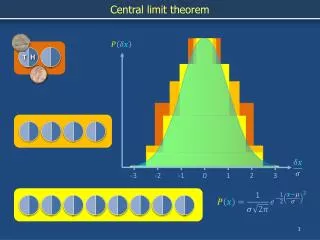

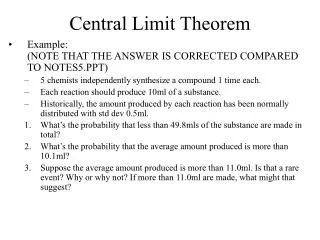

Central Limit Theorem

• Let $X_1, X_2, \ldots, X_n$ be $n$ independent, identically distributed random variables with mean $\mu$ and standard deviation $\sigma$. For large $n$:

• $S_n = X_1 + X_2 + \cdots + X_n$ is approximately normal with mean $n\mu$ and standard deviation $\sigma\sqrt{n}$.

• The average of the random variables (i.e., the sample mean) $S_n/n$ is approximately normal with mean $\mu$ and standard deviation $\sigma/\sqrt{n}$.
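As an illustrative check of how the mean and standard deviation of a sum scale with the number of terms, the following Python sketch simulates sums of independent uniform random variables. The Uniform(0, 1) distribution, the values of `n` and `trials`, and the helper name `simulate_sums` are assumptions made for this example and do not come from the slides.

```python
import math
import random
import statistics


def simulate_sums(n, trials, seed=0):
    """Return `trials` simulated values of X_1 + ... + X_n with X_i ~ Uniform(0, 1)."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    return [sum(rng.random() for _ in range(n)) for _ in range(trials)]


# Assumed example distribution: Uniform(0, 1) has mean 1/2 and SD sqrt(1/12).
mu = 0.5
sigma = math.sqrt(1 / 12)
n, trials = 30, 5000

sums = simulate_sums(n, trials)

# Values the theorem predicts for the sum of n terms.
predicted_mean = n * mu
predicted_sd = sigma * math.sqrt(n)

# Empirical counterparts from the simulation.
observed_mean = statistics.fmean(sums)
observed_sd = statistics.pstdev(sums)

# For a normal distribution, roughly 68% of values lie within one SD of the mean.
within_one_sd = sum(abs(s - predicted_mean) <= predicted_sd for s in sums) / trials

print(f"mean: predicted {predicted_mean:.3f}, observed {observed_mean:.3f}")
print(f"sd:   predicted {predicted_sd:.3f}, observed {observed_sd:.3f}")
print(f"fraction within one SD of the mean: {within_one_sd:.3f}")
```

Dividing each simulated sum by `n` gives sample means, whose spread should shrink by a further factor of `n`, matching the standard deviation of a single term divided by the square root of `n`.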

Suppose at each time step a particle has probability 0.3 of moving 1 step to the left, probability 0.5 of moving 1 step to the right, and probability 0.2 of staying where it is. Find the probability that after 10,000 time steps the particle is no more than 1000 steps to the right of its starting point.
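One way to work this problem is to treat the final position as a sum of 10,000 independent steps and apply the normal approximation. The sketch below does the arithmetic in Python. The helper `normal_cdf` and the half-step continuity correction are choices made for this example, not something stated on the slide.

```python
import math


def normal_cdf(z):
    """Standard normal CDF, written with erfc so far-left tails keep precision."""
    return 0.5 * math.erfc(-z / math.sqrt(2))


# One step of the particle: displacement -> probability (from the problem statement).
step_dist = {-1: 0.3, +1: 0.5, 0: 0.2}
n = 10_000

# Mean and variance of a single step.
step_mean = sum(x * p for x, p in step_dist.items())
step_var = sum(x**2 * p for x, p in step_dist.items()) - step_mean**2
step_sd = math.sqrt(step_var)

# Mean and SD of the position after n steps (sum of n i.i.d. steps).
total_mean = n * step_mean
total_sd = step_sd * math.sqrt(n)

# Event: position <= 1000. The +0.5 is a continuity correction (an added
# assumption; it makes no practical difference this far into the tail).
z = (1000 + 0.5 - total_mean) / total_sd
prob = normal_cdf(z)

print(f"step mean = {step_mean:.2f}, step SD = {step_sd:.4f}")
print(f"position after {n} steps: mean = {total_mean:.0f}, SD = {total_sd:.2f}")
print(f"z = {z:.2f}, P(position <= 1000) is approximately {prob:.3g}")
```

Under these assumptions the position has mean 2000 and standard deviation about 87, so 1000 lies more than 11 standard deviations below the mean and the requested probability is effectively zero. The normal approximation is not accurate in relative terms that far into the tail, but the conclusion that the event is negligible stands.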

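Conditional Expectation Given an Event

• The conditional expectation of a random variable $Y$ given an event $A$, denoted $E(Y \mid A)$, is the expectation of $Y$ under the conditional probability distribution given $A$:

$$E(Y \mid A) = \sum_{y} y \, P(Y = y \mid A),$$

where the sum runs over all possible values $y$ of a discrete $Y$.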
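A small numerical illustration of conditional expectation given an event may help. The Python sketch below uses a fair six-sided die as an assumed example. The function name `conditional_expectation` and its arguments are hypothetical, introduced only for this illustration. It sums over outcomes rather than over values of Y, which gives the same result as averaging the values of Y under the conditional distribution given A.

```python
from fractions import Fraction


def conditional_expectation(outcomes, y, in_event):
    """E(Y | A) on a finite outcome space.

    outcomes: dict mapping each outcome to its probability
    y:        function giving the value of Y at an outcome
    in_event: function returning True when the outcome belongs to A
    """
    p_event = sum(p for w, p in outcomes.items() if in_event(w))
    if p_event == 0:
        raise ValueError("cannot condition on an event of probability zero")
    # Each outcome in A gets conditional probability P(outcome) / P(A).
    return sum(y(w) * p for w, p in outcomes.items() if in_event(w)) / p_event


# Assumed example: one roll of a fair die, Y = the face shown.
die = {face: Fraction(1, 6) for face in range(1, 7)}


def face_value(w):
    return w


e_given_even = conditional_expectation(die, face_value, lambda w: w % 2 == 0)
e_given_high = conditional_expectation(die, face_value, lambda w: w >= 5)

print("E(Y | roll is even) =", e_given_even)
print("E(Y | roll >= 5)    =", e_given_high)
```

Exact fractions are used so the results can be compared with a hand calculation, for example (2 + 4 + 6) / 3 for the even faces.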

Rule of Average Conditional Expectations

• For any random variable $Y$ with finite expectation and any discrete random variable $X$,

$$E(Y) = \sum_{x} E(Y \mid X = x) \, P(X = x).$$

• Another way of writing the above is

$$E(Y) = E[E(Y \mid X)].$$
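The rule of average conditional expectations can be checked on a small two-stage experiment. In the Python sketch below, the setup is an assumption chosen for illustration: X is the roll of a fair die, and Y is the number of heads in X tosses of a fair coin. The function names are hypothetical. The expectation of Y is computed twice, once by averaging the conditional expectations over the distribution of X, and once directly from the marginal distribution of Y.

```python
from fractions import Fraction
from math import comb

# Assumed example: X is a fair die roll, so P(X = x) = 1/6 for x = 1..6.
p_x = {x: Fraction(1, 6) for x in range(1, 7)}


def p_y_given_x(y, x):
    """P(Y = y | X = x): y heads in x fair coin tosses (0 when y > x)."""
    return Fraction(comb(x, y), 2**x)


def cond_exp_y_given_x(x):
    """E(Y | X = x), computed from the conditional distribution of Y."""
    return sum(y * p_y_given_x(y, x) for y in range(x + 1))


# Average the conditional expectations, weighting each by P(X = x).
rule_value = sum(cond_exp_y_given_x(x) * p_x[x] for x in p_x)

# Direct route: build the marginal distribution of Y, then take its mean.
p_y = {y: sum(p_x[x] * p_y_given_x(y, x) for x in p_x) for y in range(7)}
direct_value = sum(y * p for y, p in p_y.items())

print("E(Y) via conditional expectations:", rule_value)
print("E(Y) via the marginal of Y:       ", direct_value)
```

Both routes agree. The first is usually easier by hand, since each conditional expectation here is just x/2 and the average of x/2 over a fair die is 7/4.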