What is a P2P system?


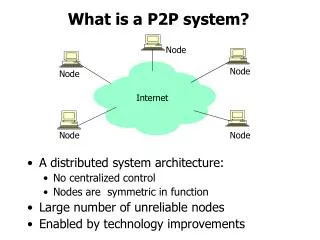


Presentation Transcript

What is a P2P system?
• A distributed system architecture:
  • No centralized control
  • Nodes are symmetric in function
  • Large number of unreliable nodes
• Enabled by technology improvements
[Figure: several nodes connected through the Internet]

How to build critical services?
• Many critical services use the Internet
  • Hospitals, government agencies, etc.
• These services need to be robust against:
  • Node and communication failures
  • Load fluctuations (e.g., flash crowds)
  • Attacks (including DDoS)

The promise of P2P computing
• Reliability: no central point of failure
  • Many replicas
  • Geographic distribution
• High capacity through parallelism:
  • Many disks
  • Many network connections
  • Many CPUs
• Automatic configuration
• Useful in public and proprietary settings

Traditional distributed computing: client/server
• Successful architecture, and will continue to be so
• Tremendous engineering necessary to make server farms scalable and robust
[Figure: several clients reaching one server through the Internet]

The abstraction: Distributed hash table (DHT)
[Figure: three layers running across many nodes. The distributed application (file sharing) calls put(key, data) and get(key) → data on the distributed hash table (DHash), which calls lookup(key) → node IP address on the lookup service (Chord).]
• Application may be distributed over many nodes
• DHT distributes data storage over many nodes

A DHT has a good interface
• put(key, value) and get(key) → value
  • Simple interface!
• API supports a wide range of applications
  • DHT imposes no structure/meaning on keys
• Key/value pairs are persistent and global
  • Can store keys in other DHT values
  • And thus build complex data structures
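As a minimal sketch of the put/get interface and of "storing keys in other DHT values", the example below uses a single in-memory dictionary as a stand-in for the whole DHT. The names `ToyDHT`, `put_block`, and `read_file` are hypothetical, and choosing SHA-1 of the value as the key is an assumption borrowed from the later CFS slides, not a requirement of the interface.

```python
import hashlib


class ToyDHT:
    """In-memory stand-in for a DHT: one dict plays the role of all nodes."""

    def __init__(self):
        self._store = {}

    def put(self, key: bytes, value: bytes) -> None:
        self._store[key] = value

    def get(self, key: bytes) -> bytes:
        return self._store[key]


def put_block(dht: ToyDHT, data: bytes) -> bytes:
    """Store data under the SHA-1 of its contents and return that key."""
    key = hashlib.sha1(data).digest()  # 20 bytes
    dht.put(key, data)
    return key


def read_file(dht: ToyDHT, index_key: bytes) -> bytes:
    """Follow an index block whose value is a concatenation of 20-byte keys."""
    index_block = dht.get(index_key)
    keys = [index_block[i:i + 20] for i in range(0, len(index_block), 20)]
    return b"".join(dht.get(k) for k in keys)


dht = ToyDHT()
leaf_a = put_block(dht, b"hello ")
leaf_b = put_block(dht, b"world")
# The index block's value holds the keys of the two leaf blocks.
index = put_block(dht, leaf_a + leaf_b)
```

Reading back with `read_file(dht, index)` walks the two-level structure; the DHT itself never interprets the keys or the values.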

A DHT makes a good shared infrastructure
• Many applications can share one DHT service
  • Much as applications share the Internet
• Eases deployment of new applications
• Pools resources from many participants
  • Efficient due to statistical multiplexing
  • Fault-tolerant due to geographic distribution

Recent DHT-based projects
• File sharing [CFS, OceanStore, PAST, Ivy, …]
• Web cache [Squirrel, …]
• Archival/backup store [HiveNet, Mojo, Pastiche]
• Censor-resistant stores [Eternity, FreeNet, …]
• DB query and indexing [PIER, …]
• Event notification [Scribe]
• Naming systems [ChordDNS, Twine, …]
• Communication primitives [I3, …]
Common thread: data is location-independent

Roadmap
• One application: CFS/DHash
• One structured overlay: Chord
• Alternatives:
  • Other solutions
  • Geometry and performance
• The interface
• Applications

CFS: Cooperative file sharing
• DHT used as a robust block store
• Client of DHT implements file system
  • Read-only: CFS, PAST
  • Read-write: OceanStore, Ivy
[Figure: the file system calls put(key, block) and get(key) → block on the distributed hash table, which runs across many nodes]

File representation: self-authenticating data
[Figure: a signed root block (key=995) lists the keys of directory blocks; directory blocks map names such as "a.txt" to i-node blocks (ID=144); i-node blocks list the keys of data blocks. The IDs 901, 431, and 144 are SHA-1 hashes.]
• Signed blocks:
  • Root blocks; Chord ID = H(publisher's public key)
• Unsigned blocks:
  • Directory blocks, inode blocks, data blocks; Chord ID = H(block contents)
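The check that makes unsigned blocks self-authenticating can be sketched as follows: a client recomputes the hash of whatever it fetched and compares it with the key it asked for. This covers only the content-hashed blocks; verifying the signature on a root block is left out. The names `block_id` and `fetch_verified`, and the plain dictionary used as the store, are illustrative assumptions.

```python
import hashlib


def block_id(contents: bytes) -> str:
    """ID of an unsigned block: the SHA-1 hash of its contents."""
    return hashlib.sha1(contents).hexdigest()


def fetch_verified(store: dict, key: str) -> bytes:
    """Fetch a block and reject it if it does not hash to the requested key."""
    data = store[key]
    if block_id(data) != key:
        raise ValueError("block does not match its ID")
    return data


# Hypothetical store standing in for the DHT.
store = {}
good = b"file data"
good_key = block_id(good)
store[good_key] = good
```

If a node returns altered bytes for `good_key`, `fetch_verified` raises instead of handing bad data to the file system.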

DHT distributes blocks by hashing IDs
[Figure: signed root blocks 995 (key=901, key=732) and 247 (key=407, key=992, key=705) and the blocks they reference (732, 705, 407, 901, 992) are spread over Nodes A, B, C, and D across the Internet]
• DHT replicates blocks for fault tolerance
• DHT caches popular blocks for load balance

DHT implementation challenges
• Scalable lookup
• Balance load (flash crowds)
• Handling failures
• Coping with systems in flux
• Network-awareness for performance
• Robustness with untrusted participants
• Programming abstraction
• Heterogeneity
• Anonymity
• Indexing
Goal: simple, provably-good algorithms

1. The lookup problem
[Figure: a publisher issues Put(key=SHA-1(data), value=data…) and a client issues Get(key=SHA-1(data)); nodes N1 to N6 are connected through the Internet, and it is unknown which node holds the key]
• Get() is a lookup followed by a check
• Put() is a lookup followed by a store

Centralized lookup (Napster)
[Figure: the publisher at N4 holds key="title", value=file data… and registers SetLoc("title", N4) with a central DB; the client sends Lookup("title") to the DB]
Simple, but O(N) state and a single point of failure

Flooded queries (Gnutella)
[Figure: the client's Lookup("title") is flooded from node to node until it reaches the publisher at N4, which holds key="title", value=MP3 data…]
Robust, but worst case O(N) messages per lookup

Algorithms based on routing
[Figure: circular ID space holding nodes N32, N60, N90, N105 and keys K5, K20, K80]
• Map keys to nodes in a load-balanced way
  • Hash keys and nodes into a string of digits
  • Assign key to "closest" node
• Forward a lookup for a key to a closer node
• Join: insert node in ring
Examples: CAN, Chord, Kademlia, Pastry, Tapestry, Viceroy, …
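One way to make "assign key to the closest node" concrete is Chord's rule: a key belongs to its successor, the first node whose ID is equal to or follows the key on the ring. The sketch below applies that rule to the node and key IDs from the slide's figure. The function name `successor` is illustrative, and other systems (for example prefix- or XOR-based ones) define "closest" differently.

```python
from bisect import bisect_left


def successor(node_ids, key):
    """Return the first node ID at or after key, wrapping around the ring."""
    ring = sorted(node_ids)
    i = bisect_left(ring, key)
    return ring[i % len(ring)]  # past the largest ID, wrap to the smallest


# Node IDs taken from the figure: N32, N60, N90, N105.
nodes = [32, 60, 90, 105]
owners = {key: successor(nodes, key) for key in (5, 20, 80)}
```

With these IDs, K5 and K20 land on N32 and K80 lands on N90; a key larger than 105 wraps around to N32.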

Chord’s routing table: fingers
[Figure: N80's fingers point ½, ¼, 1/8, 1/16, 1/32, 1/64, and 1/128 of the way around the ring]

Lookups take O(log(N)) hops
[Figure: Lookup(K19) starts at N80 on a ring of N5, N10, N20, N32, N60, N80, N99, N110 and ends at N20, which holds K19]
• Lookup: route to closest predecessor
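A small simulation can illustrate finger-based routing. The sketch below assumes a 7-bit identifier space (IDs 0 to 127, chosen only because it fits the node numbers in the figure), computes every finger table from global knowledge of the ring instead of running Chord's join and stabilization protocols, and counts how many times the query is forwarded. All function names are illustrative.

```python
M = 7            # assumed number of ID bits, so IDs are 0..127
SIZE = 2 ** M
RING = [5, 10, 20, 32, 60, 80, 99, 110]  # sorted node IDs from the figure


def in_open(x, a, b):
    """True if x lies in the ring interval (a, b)."""
    if a < b:
        return a < x < b
    return x > a or x < b  # interval wraps past zero


def in_half_open(x, a, b):
    """True if x lies in the ring interval (a, b]."""
    if a < b:
        return a < x <= b
    return x > a or x <= b


def successor(ring, ident):
    """First node at or after ident on the sorted ring."""
    for n in ring:
        if n >= ident:
            return n
    return ring[0]


def fingers(ring, n):
    """Finger i points at the successor of n + 2**i."""
    return [successor(ring, (n + 2 ** i) % SIZE) for i in range(M)]


def lookup(ring, start, key):
    """Return (node responsible for key, number of forwarding hops)."""
    node, hops = start, 0
    while True:
        succ = successor(ring, (node + 1) % SIZE)
        if in_half_open(key, node, succ):
            return succ, hops
        # Forward to the closest finger that still precedes the key.
        nxt = succ
        for f in reversed(fingers(ring, node)):
            if in_open(f, node, key):
                nxt = f
                break
        node, hops = nxt, hops + 1


owner, hops = lookup(RING, 80, 19)
```

Starting at N80, the query for K19 is forwarded to N5 and then N10, whose successor N20 is responsible for the key. Each forward moves the query clockwise toward the key and never past it, which is what keeps the hop count logarithmic on large rings.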

Can we do better?
• Caching
• Exploit flexibility at the geometry level
• Iterative vs. recursive lookups

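2. Balance load
[Figure: Lookup(K19) from N80 on a ring of N5, N10, N20, N32, N60, N80, N99, N110; K19 is stored at N20 and a cached copy sits at a node on the lookup path]
• Hash function balances keys over nodes
• For popular keys, cache along the path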

Why Caching Works Well
• Only O(log N) nodes have fingers pointing to N20
• This limits the single-block load on N20

3. Handling failures: redundancy
[Figure: ring of N5, N10, N20, N32, N40, N60, N80, N99, N110 with three copies of K19 stored on consecutive nodes]
• Each node knows IP addresses of next r nodes
• Each key is replicated at next r nodes
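The replication rule can be sketched in a few lines. The example assumes that "next r nodes" means the key's successor plus the nodes that follow it, r nodes in total, and that a reader simply uses the first replica holder that is still alive. `replica_set` and `first_live` are hypothetical helper names.

```python
def replica_set(ring, key, r):
    """The r nodes that store key: its successor and the nodes after it."""
    ring = sorted(ring)
    start = next((i for i, n in enumerate(ring) if n >= key), 0)
    return [ring[(start + i) % len(ring)] for i in range(min(r, len(ring)))]


def first_live(ring, key, r, alive):
    """First replica holder that is still alive, or None if all r failed."""
    for node in replica_set(ring, key, r):
        if node in alive:
            return node
    return None


ring = [5, 10, 20, 32, 40, 60, 80, 99, 110]
holders = replica_set(ring, 19, 3)
# Suppose N20 has failed: everyone else is still alive.
survivor = first_live(ring, 19, 3, alive=set(ring) - {20})
```

With r = 3, K19 is held by N20, N32, and N40; when N20 fails, N32 becomes the first live successor and can serve the key.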

Lookups find replicas
[Figure: Lookup(BlockID=17) on a ring of N5, N10, N20, N40, N50, N60, N68, N80, N99, N110, with the numbered RPCs drawn as arrows toward the nodes holding Block 17]
RPCs:
1. Lookup step
2. Get successor list
3. Failed block fetch
4. Block fetch

First Live Successor Manages Replicas
[Figure: ring of N5, N10, N20, N40, N50, N60, N68, N80, N99, N110 showing Block 17 and a copy of 17 on successive nodes]
• Node can locally determine that it is the first live successor

4. Systems in flux
• Lookup takes O(log N) hops if the system is stable
• But the system is never stable!
• What we desire are theorems of the type:
  • In the almost-ideal state, … log(N) …
  • The system maintains the almost-ideal state as nodes join and fail

Half-life [Liben-Nowell 2002]
[Figure: a system of N nodes; N new nodes join, N/2 old nodes leave]
• Doubling time: time for N joins
• Halving time: time for N/2 old nodes to fail
• Half life: MIN(doubling time, halving time)

Applying half life
• For any node u in any P2P network: if u wishes to stay connected with high probability, then, on average, u must be notified about Ω(log N) new nodes per half life
• And so on, …
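To get a feel for the numbers, the half-life definition and the per-half-life bound can be turned into a rough calculation. This is only an order-of-magnitude sketch: the bound is asymptotic, so treating it as exactly log2(N) notifications per half life (constant factor 1) is an assumption, as are the function names and the example times.

```python
import math


def half_life(doubling_time: float, halving_time: float) -> float:
    """Half life is the smaller of the doubling time and the halving time."""
    return min(doubling_time, halving_time)


def notification_rate(n_nodes: int, doubling_time: float,
                      halving_time: float) -> float:
    """Rough notifications per second a node needs, taking the constant as 1."""
    return math.log2(n_nodes) / half_life(doubling_time, halving_time)


# Hypothetical system: 1024 nodes, doubles in 1 hour, halves in 30 minutes.
hl = half_life(3600.0, 1800.0)
rate = notification_rate(1024, 3600.0, 1800.0)
```

For this hypothetical system the half life is 1800 seconds, so a node would need to hear about roughly ten nodes every half hour to stay connected.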

5. Optimize routing to reduce latency
[Figure: ring with N20, N40, N41, N80]
• Nodes close on ring, but far away in Internet
• Goal: put nodes in routing table that result in few hops and low latency

“Close” metric impacts choice of nearby nodes
[Figure: ring with N06 (USA), N32 (Europe), N60 (USA), N103 (Far East), N105 (USA), and key K104]
• Chord’s numerical closeness and (original) routing table restrict choice
• Should new nodes be able to choose their own ID?
• Other metrics allow for more choice (e.g., prefix-based, XOR)

6. Malicious participants
• Attacker denies service
  • Flood DHT with data
• Attacker returns incorrect data [detectable]
  • Self-authenticating data
• Attacker denies data exists [liveness]
  • Bad node is responsible, but says no
• Bad node supplies incorrect routing info
  • Bad nodes make a bad ring, and good node joins it
• Basic approach: use redundancy

Sybil attack [Douceur 02]
[Figure: ring of N5, N10, N20, N32, N40, N60, N80, N99, N110]
• Attacker creates multiple identities
• Attacker controls enough nodes to foil the redundancy
• Need a way to control creation of node IDs

One solution: secure node IDs
• Every node has a public key
• Certificate authority signs public key of good nodes
• Every node signs and verifies messages
• Quotas per publisher

Another solution: exploit practical Byzantine protocols
[Figure: ring with N06, N32, N60, N103, N105 and a core set of servers labeled N]
• A core set of servers is pre-configured with keys and performs admission control [OceanStore]
• The servers achieve consensus with a practical Byzantine recovery protocol [Castro and Liskov ’99 and ’00]
• The servers serialize updates [OceanStore] or assign secure node IDs [Configuration service]

A more decentralized solution: weak secure node IDs
• ID = SHA-1(node's IP address)
• Assumption: attacker controls a limited number of IP addresses
• Before using a node, challenge it to verify its ID
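The ID check itself is a one-line hash comparison, sketched below. It assumes the IP address is hashed in its dotted-decimal text form, which the slide does not specify, and it leaves out the challenge step (confirming that the node really answers at that address, for instance by echoing a fresh nonce). `node_id` and `id_matches_ip` are illustrative names.

```python
import hashlib


def node_id(ip: str) -> str:
    """Weak secure ID: SHA-1 of the node's IP address (text form assumed)."""
    return hashlib.sha1(ip.encode("ascii")).hexdigest()


def id_matches_ip(claimed_id: str, observed_ip: str) -> bool:
    """Accept a node only if its claimed ID is the hash of the address we see."""
    return claimed_id == node_id(observed_ip)


# Documentation-range addresses used purely as examples.
honest = id_matches_ip(node_id("192.0.2.10"), "192.0.2.10")
forged = id_matches_ip(node_id("192.0.2.10"), "192.0.2.99")
```

Because the ID is derived from the address, a node cannot pick an arbitrary position on the ring; an attacker gets only as many IDs as IP addresses it controls.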

Using weak secure node IDs
• Detect malicious nodes
  • Define verifiable system properties
    • Each node has a successor
    • Data is stored at its successor
  • Allow querier to observe lookup progress
    • Each hop should bring the query closer
  • Cross-check routing tables with random queries
• Recovery: assume limited number of bad nodes
  • Quota per node ID
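A querier that sees every hop of a lookup can check the "each hop brings the query closer" property directly. The sketch below measures closeness as clockwise distance to the key on a Chord-style ring with an assumed 7-bit ID space; both function names are hypothetical.

```python
def clockwise_distance(a: int, b: int, size: int) -> int:
    """Steps needed to travel clockwise from a to b on a ring of given size."""
    return (b - a) % size


def lookup_makes_progress(hops, key, size=128):
    """True if every hop is strictly closer to the key than the one before."""
    d = [clockwise_distance(h, key, size) for h in hops]
    return all(later < earlier for earlier, later in zip(d, d[1:]))


# A lookup for key 19 that visits N80, N5, N10 makes steady progress.
ok = lookup_makes_progress([80, 5, 10], key=19)
# A hop from N5 to N99 moves away from the key and should raise suspicion.
suspicious = lookup_makes_progress([80, 5, 99], key=19)
```

A path that fails this check points at the node that supplied the bad next hop, which the querier can then route around.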

Summary http://project-iris.net