CONSTRUCTING AN ARITHMETIC LOGIC UNIT

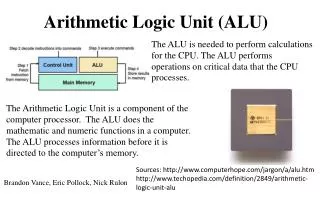



Presentation Transcript

CHAPTER 4: PART II CONSTRUCTING AN ARITHMETIC LOGIC UNIT

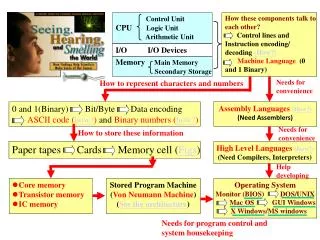

An ALU (arithmetic logic unit)
• Let's build a 32-bit ALU to support the and and or instructions.
• We'll just build a 1-bit ALU and use 32 of them.
• One implementation (Figure A): use a mux with AND/OR gates.
• Another possible implementation (Figure B): a sum-of-products formula. Why isn't this a good solution?
[Figure labels: r0, r1, r2, …, r30, r31]
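The mux-based idea of Figure A can be sketched in software. This is only an illustration: the function names and the encoding `op = 0` for and, `op = 1` for or are assumptions made here, not part of the slides.

```python
def alu1_and_or(a: int, b: int, op: int) -> int:
    """1-bit ALU: op = 0 selects a AND b, op = 1 selects a OR b."""
    and_out = a & b  # both gates compute their outputs all the time
    or_out = a | b
    return or_out if op else and_out  # the mux passes one gate output on


def alu32_and_or(a: int, b: int, op: int) -> int:
    """32 copies of the 1-bit ALU, one per bit position."""
    result = 0
    for i in range(32):
        bit = alu1_and_or((a >> i) & 1, (b >> i) & 1, op)
        result |= bit << i
    return result
```

For example, `alu32_and_or(0b1100, 0b1010, 0)` gives `0b1000` and the same inputs with `op = 1` give `0b1110`.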

Review: The Multiplexor (mux)
• Selects one of the inputs to be the output, based on a control input.
• Note: we call this a 4-input mux even though it has 6 inputs (4 data inputs plus 2 select lines)!
• Let's build our ALU using a mux.
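A 4-input mux can be written as an AND-OR network over single bits. The sketch below assumes the select lines are named `s1` (high bit) and `s0` (low bit); those names are hypothetical.

```python
def mux4(d0: int, d1: int, d2: int, d3: int, s1: int, s0: int) -> int:
    """4-input mux on single bits: output is d[2*s1 + s0]."""
    n1, n0 = 1 - s1, 1 - s0  # inverted select lines
    return (
        (d0 & n1 & n0)
        | (d1 & n1 & s0)
        | (d2 & s1 & n0)
        | (d3 & s1 & s0)
    )
```

Exactly one of the four AND terms is enabled for any value of the select lines, so the OR simply passes the chosen data input through.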

ALU Implementations
• We will build a 1-bit ALU for a few selected instructions, then use it to build a 32-bit ALU for those instructions.
• Let's first look at a 1-bit adder:
  $\text{cout} = a \cdot b + a \cdot \text{cin} + b \cdot \text{cin}$
  $\text{sum} = a \oplus b \oplus \text{cin}$
• How could we build a 1-bit ALU for add, and, and or?
• How could we build a 32-bit ALU?

Building a 32 bit ALU
• 1-bit ALU: Result = a operation b
• 32-bit ALU: a, b, and Result are 32 bits each
[Figure: ALU block with inputs a, b, Operation, CarryIn and outputs Result, CarryOut]
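One way to answer the two questions above is sketched below: a full adder built from the cout and sum equations, a 1-bit ALU that muxes between and, or, and add, and a 32-bit ALU that chains 32 of them through the carry. The operation encoding (0 = and, 1 = or, 2 = add) and all function names are assumptions made for this illustration.

```python
def full_adder(a: int, b: int, cin: int) -> tuple[int, int]:
    """1-bit adder: returns (sum, cout)."""
    s = a ^ b ^ cin
    cout = (a & b) | (a & cin) | (b & cin)
    return s, cout


def alu1(a: int, b: int, cin: int, op: int) -> tuple[int, int]:
    """1-bit ALU. op: 0 = and, 1 = or, 2 = add. Returns (result, cout)."""
    s, cout = full_adder(a, b, cin)
    result = (a & b, a | b, s)[op]  # the mux selects one of three outputs
    return result, cout


def alu32(a: int, b: int, op: int, cin: int = 0) -> tuple[int, int]:
    """32-bit ALU: the carry out of bit i feeds the carry in of bit i + 1."""
    result, carry = 0, cin
    for i in range(32):
        bit, carry = alu1((a >> i) & 1, (b >> i) & 1, carry, op)
        result |= bit << i
    return result, carry
```

`alu32(5, 7, 2)` returns `(12, 0)`, and `alu32(0xFFFFFFFF, 1, 2)` returns `(0, 1)` because the carry ripples out of the top bit.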

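What about subtraction (a – b)?
• Two's complement approach: just negate b and add, since a – b = a + (–b).
• How do we negate? Invert every bit of b and add 1.
• A very clever solution: invert b with a mux and supply the extra 1 through the carry-in of the least significant bit.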
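A minimal sketch of two's complement subtraction, assuming 32-bit operands: the adder is reused unchanged, b is inverted, and the carry-in provides the "+1". The names `add32` and `sub32` are hypothetical.

```python
MASK = 0xFFFFFFFF  # 32-bit word


def add32(a: int, b: int, cin: int = 0) -> tuple[int, int]:
    """32-bit add with carry-in. Returns (sum, carry_out)."""
    total = (a & MASK) + (b & MASK) + cin
    return total & MASK, total >> 32


def sub32(a: int, b: int) -> tuple[int, int]:
    """a - b computed as a + (NOT b) + 1, reusing the adder."""
    inverted_b = ~b & MASK  # invert every bit of b
    return add32(a, inverted_b, cin=1)  # carry-in of 1 supplies the +1
```

`sub32(7, 5)[0]` is `2`, and `sub32(5, 7)[0]` is `0xFFFFFFFE`, the two's complement pattern for -2.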

Tailoring the ALU to the MIPS
• Need to support the set-on-less-than instruction (slt)
  • remember: slt is an arithmetic instruction
  • produces a 1 if rs < rt and 0 otherwise
  • use subtraction: (a – b) < 0 implies a < b
• Need to support test for equality (beq $t5, $t6, Label)
  • use subtraction: (a – b) = 0 implies a = b

Supporting slt: set on less than
• Can we figure out the idea?
• We need a 1 when the result of subtracting is negative.
• That is, we need a 1 in the least significant bit and a 0 elsewhere when the result of subtracting has a 1 in its most significant bit.
• We also need 0's in all bits when the result of subtracting has a 0 in its most significant bit.
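The slt idea can be sketched as follows, assuming 32-bit two's complement operands and a hypothetical function name `slt32`. Like the slide, this simple version ignores overflow, so it can give the wrong answer when a - b does not fit in 32 bits.

```python
MASK = 0xFFFFFFFF  # 32-bit word


def slt32(a: int, b: int) -> int:
    """Set on less than: 1 if a < b (as signed 32-bit values), else 0."""
    diff = ((a & MASK) + (~b & MASK) + 1) & MASK  # a - b via two's complement
    sign_bit = diff >> 31  # most significant bit of the difference
    # The sign bit becomes bit 0 of the result; bits 1..31 are all 0.
    return sign_bit
```

Here `slt32(3, 5)` is `1`, while `slt32(5, 3)` and `slt32(5, 5)` are both `0`.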

Test for equality: beq
• Notice the control lines:
  000 = and
  001 = or
  010 = add
  110 = subtract
  111 = slt
• a – b is 0 if all bits in a – b are 0. That is, for the result r = a – b: zero = NOT (r31 OR r30 OR … OR r0)
• Note: zero is a 1 when the result is zero!
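Putting the control lines and the zero output together gives the sketch below. It assumes the 3-bit control splits into an invert-b bit (the top bit) and a 2-bit operation select (the low two bits), which matches the five encodings listed but is not spelled out on the slide; the function name `alu` is hypothetical.

```python
MASK = 0xFFFFFFFF  # 32-bit word


def alu(a: int, b: int, control: int) -> tuple[int, int]:
    """32-bit ALU. control: 000 and, 001 or, 010 add, 110 subtract, 111 slt.

    Returns (result, zero), where zero is 1 when the result is all 0 bits.
    """
    invert_b = (control >> 2) & 1  # assumed meaning of the top control bit
    operation = control & 0b11     # assumed meaning of the low two bits
    a &= MASK
    b_in = (~b & MASK) if invert_b else (b & MASK)
    total = (a + b_in + invert_b) & MASK  # carry-in equals the invert bit
    if operation == 0:
        result = a & b_in
    elif operation == 1:
        result = a | b_in
    elif operation == 2:
        result = total            # add, or subtract when b is inverted
    else:
        result = total >> 31      # slt: sign bit of a - b goes to bit 0
    zero = int(result == 0)       # NOT (r31 OR r30 OR ... OR r0)
    return result, zero
```

For beq, the control is set to 110 (subtract) and the branch is taken when zero is 1: `alu(5, 5, 0b110)` returns `(0, 1)`.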

Conclusion
• We can build an ALU to support the MIPS instruction set
  • key idea: use multiplexor to select the output we want
  • we can efficiently perform subtraction using two’s complement
  • we can replicate a 1-bit ALU to produce a 32-bit ALU
• Important points about hardware
  • all of the gates are always working
  • the speed of a gate is affected by the number of inputs to the gate
  • the speed of a circuit is affected by the number of gates in series (on the “critical path” or the “deepest level of logic”)
• Our primary focus is comprehension; however,
  • clever changes to organization can improve performance (similar to using better algorithms in software)
  • we’ll look at two examples, for addition and multiplication

Problem: ripple carry adder is slow
• Is a 32-bit ALU as fast as a 1-bit ALU?
• Is there more than one way to do addition?
  • two extremes: ripple carry and sum-of-products
• Can you see the ripple? How could you get rid of it?
  $c_1 = b_0 c_0 + a_0 c_0 + a_0 b_0$
  $c_2 = b_1 c_1 + a_1 c_1 + a_1 b_1$
  $c_3 = b_2 c_2 + a_2 c_2 + a_2 b_2$
  $c_4 = b_3 c_3 + a_3 c_3 + a_3 b_3$
• Expanded in terms of $c_0$ only: $c_2 = {?}$, $c_3 = {?}$, $c_4 = {?}$. Not feasible! Why?
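As a worked step (an illustration added here, not taken from the slides), substituting $c_1$ into the equation for $c_2$ shows how quickly the two-level sum-of-products form grows:

```latex
\begin{aligned}
c_2 &= b_1 c_1 + a_1 c_1 + a_1 b_1 \\
    &= b_1 (b_0 c_0 + a_0 c_0 + a_0 b_0) + a_1 (b_0 c_0 + a_0 c_0 + a_0 b_0) + a_1 b_1 \\
    &= a_1 b_1 + a_1 a_0 b_0 + a_1 a_0 c_0 + a_1 b_0 c_0
       + b_1 a_0 b_0 + b_1 a_0 c_0 + b_1 b_0 c_0
\end{aligned}
```

That is already 7 product terms for $c_2$ against 3 for $c_1$. Without further simplification the count roughly doubles with every additional bit, which hints at why the fully expanded form is impractical for 32 bits.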

Carry-lookahead adder
• An approach in between our two extremes
• Motivation:
  • If we didn't know the value of carry-in, what could we do?
  • When would we always generate a carry? $g_i = a_i b_i$
  • When would we propagate the carry? $p_i = a_i + b_i$
• Did we get rid of the ripple?
  $c_1 = g_0 + p_0 c_0$
  $c_2 = g_1 + p_1 c_1$
  $c_3 = g_2 + p_2 c_2$
  $c_4 = g_3 + p_3 c_3$
• Expanded in terms of $c_0$ only: $c_2 = {?}$, $c_3 = {?}$, $c_4 = {?}$. Feasible! Why?

Fast Carry, First Level of Abstraction: Propagate and Generate
• $c_{i+1} = (b_i c_i) + (a_i c_i) + (a_i b_i) = (a_i b_i) + (a_i + b_i) c_i = g_i + p_i c_i$, where $g_i = a_i b_i$ and $p_i = a_i + b_i$.
• Expanding each carry in terms of $c_0$:
  $c_1 = g_0 + p_0 c_0$
  $c_2 = g_1 + p_1 c_1 = g_1 + p_1 g_0 + p_1 p_0 c_0$
  $c_3 = g_2 + p_2 c_2 = g_2 + p_2 g_1 + p_2 p_1 g_0 + p_2 p_1 p_0 c_0$
  $c_4 = g_3 + p_3 c_3 = g_3 + p_3 g_2 + p_3 p_2 g_1 + p_3 p_2 p_1 g_0 + p_3 p_2 p_1 p_0 c_0$
• $c_{i+1}$ is 1 if an earlier adder generates a carry and all intermediate adders propagate the carry.
[Figure: plumbing analogy for the first level]
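The first-level equations translate directly into code. The sketch below adds two 4-bit numbers and computes every carry from the generate and propagate signals and c0 alone, with no carry waiting on another carry. The helper names `to_bits` and `cla4` are hypothetical.

```python
def to_bits(x: int, n: int = 4) -> list[int]:
    """Least significant bit first."""
    return [(x >> i) & 1 for i in range(n)]


def cla4(a: int, b: int, c0: int = 0) -> tuple[int, int]:
    """4-bit carry-lookahead add. Returns (4-bit sum, c4)."""
    a_bits, b_bits = to_bits(a), to_bits(b)
    g = [x & y for x, y in zip(a_bits, b_bits)]  # generate:  gi = ai AND bi
    p = [x | y for x, y in zip(a_bits, b_bits)]  # propagate: pi = ai OR bi
    c1 = g[0] | (p[0] & c0)
    c2 = g[1] | (p[1] & g[0]) | (p[1] & p[0] & c0)
    c3 = g[2] | (p[2] & g[1]) | (p[2] & p[1] & g[0]) | (p[2] & p[1] & p[0] & c0)
    c4 = (
        g[3]
        | (p[3] & g[2])
        | (p[3] & p[2] & g[1])
        | (p[3] & p[2] & p[1] & g[0])
        | (p[3] & p[2] & p[1] & p[0] & c0)
    )
    carries = [c0, c1, c2, c3]
    total = 0
    for i in range(4):
        total |= (a_bits[i] ^ b_bits[i] ^ carries[i]) << i
    return total, c4
```

`cla4(9, 8)` returns `(1, 1)`: the 4-bit sum is 0001 and the carry out is 1, since 9 + 8 = 17.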

Fast Carry, Second Level of Abstraction: Carry look ahead
[Figure: plumbing analogy for the next-level carry-lookahead signals P0, G0]
• Super propagate:
  $P_0 = p_3 p_2 p_1 p_0$
  $P_1 = p_7 p_6 p_5 p_4$
  $P_2 = p_{11} p_{10} p_9 p_8$
  $P_3 = p_{15} p_{14} p_{13} p_{12}$
• Super generate:
  $G_0 = g_3 + p_3 g_2 + p_3 p_2 g_1 + p_3 p_2 p_1 g_0$
  $G_1 = g_7 + p_7 g_6 + p_7 p_6 g_5 + p_7 p_6 p_5 g_4$
  $G_2 = g_{11} + p_{11} g_{10} + p_{11} p_{10} g_9 + p_{11} p_{10} p_9 g_8$
  $G_3 = g_{15} + p_{15} g_{14} + p_{15} p_{14} g_{13} + p_{15} p_{14} p_{13} g_{12}$
• Generate final carry:
  $C_1 = G_0 + P_0 c_0$
  $C_2 = G_1 + P_1 G_0 + P_1 P_0 c_0$
  $C_3 = G_2 + P_2 G_1 + P_2 P_1 G_0 + P_2 P_1 P_0 c_0$
  $C_4 = G_3 + P_3 G_2 + P_3 P_2 G_1 + P_3 P_2 P_1 G_0 + P_3 P_2 P_1 P_0 c_0 = c_{16}$
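A sketch of the second level for a 16-bit adder follows. Each 4-bit group produces a super propagate P and super generate G, and the group carries C1..C4 come from those signals and c0 only. For brevity, the carries inside each group are rippled here; in hardware they would use the first-level lookahead equations. All function names are hypothetical.

```python
def bits(x: int, n: int) -> list[int]:
    """Least significant bit first."""
    return [(x >> i) & 1 for i in range(n)]


def group_pg(a4: list[int], b4: list[int]):
    """Bit-level g, p and group-level P, G for one 4-bit slice."""
    g = [x & y for x, y in zip(a4, b4)]
    p = [x | y for x, y in zip(a4, b4)]
    P = p[3] & p[2] & p[1] & p[0]
    G = g[3] | (p[3] & g[2]) | (p[3] & p[2] & g[1]) | (p[3] & p[2] & p[1] & g[0])
    return g, p, P, G


def cla16(a: int, b: int, c0: int = 0) -> tuple[int, int]:
    """16-bit add with second-level carry lookahead. Returns (sum, c16)."""
    a_bits, b_bits = bits(a, 16), bits(b, 16)
    groups = [group_pg(a_bits[i:i + 4], b_bits[i:i + 4]) for i in range(0, 16, 4)]
    P = [grp[2] for grp in groups]
    G = [grp[3] for grp in groups]
    C1 = G[0] | (P[0] & c0)
    C2 = G[1] | (P[1] & G[0]) | (P[1] & P[0] & c0)
    C3 = G[2] | (P[2] & G[1]) | (P[2] & P[1] & G[0]) | (P[2] & P[1] & P[0] & c0)
    C4 = (
        G[3]
        | (P[3] & G[2])
        | (P[3] & P[2] & G[1])
        | (P[3] & P[2] & P[1] & G[0])
        | (P[3] & P[2] & P[1] & P[0] & c0)
    )
    group_carry_in = [c0, C1, C2, C3]
    total = 0
    for k, (g, p, _, _) in enumerate(groups):
        c = group_carry_in[k]
        for i in range(4):
            pos = 4 * k + i
            total |= (a_bits[pos] ^ b_bits[pos] ^ c) << pos
            c = g[i] | (p[i] & c)  # rippled inside the group for brevity
    return total, C4
```

`cla16(0xFFFF, 1)` returns `(0, 1)`: every group propagates, so the carry generated at the bottom reaches c16 through the group-level terms.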

Use principle to build bigger adders
[Figure: the 16-bit adder with carry lookahead]

Multiplication
• More complicated than addition
  • accomplished via shifting and addition
• More time and more area
• Let's look at 3 versions based on the grade school algorithm: 0010 (multiplicand) × 1011 (multiplier)
• Negative numbers: convert and multiply
  • there are better techniques, we won’t look at them

Multiplication: Implementation: 1st Version
• Multiplier = 32 bits, Multiplicand = 64 bits, Product = 64 bits.
• Initially the Product is 64-bit zero.

Multiplication: Implementation: 1st Version
Problem with 1st Version: it needs 64-bit arithmetic, and half of the multiplicand register is always 0!
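The slide's flowchart is not reproduced in this transcript, so the sketch below follows the usual textbook shift-and-add scheme for these register sizes, which is an assumption: test the low multiplier bit, add the multiplicand into the product if it is 1, then shift the multiplicand left and the multiplier right. The function name is hypothetical.

```python
MASK32 = 0xFFFFFFFF
MASK64 = 0xFFFFFFFFFFFFFFFF


def multiply_v1(multiplicand: int, multiplier: int) -> int:
    """Unsigned 32 x 32 -> 64 bit multiply, first version."""
    mcand = multiplicand & MASK32  # sits in the right half of a 64-bit register
    mplier = multiplier & MASK32
    product = 0                    # 64-bit product starts at zero
    for _ in range(32):
        if mplier & 1:                            # low multiplier bit set?
            product = (product + mcand) & MASK64  # full 64-bit addition
        mcand = (mcand << 1) & MASK64             # multiplicand shifts left
        mplier >>= 1                              # multiplier shifts right
    return product
```

With the slide's operands, `multiply_v1(0b0010, 0b1011)` gives `22` (binary 10110).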

Multiplication: Implementation: 2nd Version
• Multiplier = 32 bits, Multiplicand = 32 bits, Product = 64 bits.
• Initially the Product is 64-bit zero.

Multiplication: Implementation: 2nd Version Problem with 2nd Version: Half of product is always = 0!
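A sketch of the second version, again assuming the usual textbook scheme since the flowchart is missing here: the multiplicand stays fixed in a 32-bit register, the addition is only 32 bits wide and targets the left half of the product, and the product shifts right instead. The function name is hypothetical.

```python
MASK32 = 0xFFFFFFFF


def multiply_v2(multiplicand: int, multiplier: int) -> int:
    """Unsigned 32 x 32 -> 64 bit multiply, second version."""
    mcand = multiplicand & MASK32
    mplier = multiplier & MASK32
    product = 0  # 64-bit product register
    for _ in range(32):
        carry = 0
        if mplier & 1:
            left = (product >> 32) + mcand  # 32-bit add into the left half
            carry = left >> 32              # carry out of the 32-bit adder
            product = ((left & MASK32) << 32) | (product & MASK32)
        # Shift the product right; the adder's carry enters at the top bit.
        product = (carry << 63) | (product >> 1)
        mplier >>= 1
    return product
```

Only a 32-bit adder is needed, and the result matches the first version: `multiply_v2(0b0010, 0b1011)` is `22`.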

Multiplication: Implementation: Final Version
• Multiplier = 32 bits, Multiplicand = 32 bits, Product = 64 bits.
• Initially, load the Multiplier into the right half of the Product, i.e., left half of Product = 0, right half of Product = Multiplier.
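In the final version the multiplier shares the product register, so no separate multiplier register is needed. The sketch below assumes the usual textbook loop (test the low product bit, add into the left half, shift right); the function name is hypothetical.

```python
MASK32 = 0xFFFFFFFF


def multiply_final(multiplicand: int, multiplier: int) -> int:
    """Unsigned 32 x 32 -> 64 bit multiply, final version."""
    mcand = multiplicand & MASK32
    product = multiplier & MASK32  # left half = 0, right half = multiplier
    for _ in range(32):
        carry = 0
        if product & 1:  # the low product bit is the current multiplier bit
            left = (product >> 32) + mcand
            carry = left >> 32
            product = ((left & MASK32) << 32) | (product & MASK32)
        # Each right shift discards a used multiplier bit and makes room
        # for one more product bit on the left.
        product = (carry << 63) | (product >> 1)
    return product
```

After 32 iterations every multiplier bit has been shifted out and the register holds the full 64-bit product.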

Floating Point (a brief look)
• We need a way to represent
  • numbers with fractions, e.g., 3.1416
  • very small numbers, e.g., .000000001
  • very large numbers, e.g., $3.15576 \times 10^9$
• Representation:
  • sign, exponent, significand: $(-1)^{\text{sign}} \times \text{significand} \times 2^{\text{exponent}}$
  • more bits for significand gives more accuracy
  • more bits for exponent increases range
• IEEE 754 floating point standard:
  • single precision [1 word]: 8 bit exponent, 23 bit significand
  • double precision [2 words]: 11 bit exponent, 52 bit significand

IEEE 754 floating-point standard
• Leading “1” bit of significand is implicit
• Exponent is “biased” to make sorting easier
  • all 0s is smallest exponent, all 1s is largest
  • bias of 127 for single precision and 1023 for double precision
  • summary: $(-1)^{\text{sign}} \times (1 + \text{significand}) \times 2^{\text{exponent} - \text{bias}}$
• Example 1:
  • decimal: $-0.75 = -3/4 = -3/2^2$
  • binary: $-0.11_2 = -1.1_2 \times 2^{-1}$
  • floating point: exponent = bias + (-1) = 127 - 1 = 126 = 01111110
  • IEEE single precision: 1 01111110 10000000000000000000000
• Example 2:
  • decimal: 0.356
  • binary: $0.01011011001_2$ (can be more accurate) $= 1.011011001_2 \times 2^{-2}$
  • floating point: exponent = 127 - 2 = 125 = 01111101
  • IEEE single precision: 0 01111101 01101100100000000000000
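The two examples can be checked with Python's standard `struct` module, which packs a value as an IEEE 754 single-precision word. The helper names below are hypothetical.

```python
import struct


def float_to_fields(x: float) -> tuple[int, int, int]:
    """Split x, rounded to single precision, into (sign, exponent, fraction)."""
    (raw,) = struct.unpack(">I", struct.pack(">f", x))  # 32-bit pattern
    sign = raw >> 31
    exponent = (raw >> 23) & 0xFF  # 8-bit biased exponent
    fraction = raw & 0x7FFFFF      # 23-bit significand field
    return sign, exponent, fraction


def fields_to_string(sign: int, exponent: int, fraction: int) -> str:
    """Format the fields the way the slide writes them."""
    return f"{sign} {exponent:08b} {fraction:023b}"
```

`fields_to_string(*float_to_fields(-0.75))` gives `1 01111110 10000000000000000000000`, matching Example 1. For 0.356 the sign and exponent fields match Example 2 and the fraction starts with the same 9 bits, but the low-order bits differ because the slide truncates the binary expansion while the hardware format rounds it to 23 bits.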

Floating Point Complexities
• Operations are somewhat more complicated (see text)
• In addition to overflow we can have “underflow”
• Accuracy can be a big problem
  • IEEE 754 keeps two extra bits, guard and round
  • four rounding modes
  • positive divided by zero yields “infinity”
  • zero divided by zero yields “not a number”
  • other complexities
• Implementing the standard can be tricky
• Not using the standard can be even worse
  • see text for description of 80x86 and Pentium bug!
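A few of these effects can be observed directly, assuming Python floats are IEEE 754 double precision (true on common platforms). Note that Python itself raises `ZeroDivisionError` for float division by zero rather than returning infinity, so the special values are produced another way in this sketch.

```python
import math

# Overflow: the result is too large for the exponent range.
overflow = 1e308 * 10

# Underflow: half of the smallest positive double rounds to zero.
underflow = 5e-324 / 2

# Limited precision: 0.1 and 0.2 have no exact binary representation.
inexact_sum_matches = (0.1 + 0.2 == 0.3)

# An operation with no meaningful value yields "not a number".
not_a_number = math.inf - math.inf
```

Here `overflow` is infinity, `underflow` is `0.0`, `inexact_sum_matches` is `False`, and `not_a_number` is NaN, which compares unequal even to itself.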

Chapter Four Summary
• Numbers can be represented as bits.
• Computer arithmetic is constrained by limited precision.
• Bit patterns have no inherent meaning, but standards do exist:
  • two’s complement
  • IEEE 754 floating point
• Computer instructions determine the “meaning” of the bit patterns.
• Performance and accuracy are important, so there are many complexities in real machines (in both algorithms and implementation).
• Arithmetic algorithms have been presented.
• Logical representations of hardware for some arithmetic operations have been given.
• We are ready to move on (and implement the datapath: ALU and registers).