Network Awareness: Understanding and Predicting Network Behavior

Learn about network awareness, including its ability to sense and describe the network environment, answer questions about network behavior, and its implementation through Bayesian networks. Explore the benefits of Bayesian network awareness and its success stories in various industries. Gain insights into autonomics, autognostics, and the concept of proprioception in network awareness. Discover how network awareness can be integrated into supercomputing for improved performance.

Presentation Transcript

BoF Rm A10/A11: Bayesian Network Awareness • Bill Rutherford • Loki Jorgenson • Marius Vilcu

Awareness … ??? Main engine failed to fire

Awareness Success Stories • Electrical power grid load prediction • User analysis • trend modeling - TV schedules – spike prediction • Weather prediction • Sensor correlation • trend modeling – storm prediction • Internet prediction • Weather maps • trend modeling – build out prediction

Proprioceptive Concept • Sense of relative disposition of body • Self meta-view of body status and dynamics wrt look ahead context • Situation awareness – nerve system • Self awareness – pain emphasis !!!

What is Network Awareness? • “The ability to sense the network environment and construct a state description” • Peysakhov et al., 2004 • “The capability of network devices and applications to be aware of network characteristics” • Cheng, 2002

What is Network Awareness? • “The ability to answer questions quickly and accurately about network behavior” • Hughes & Somayaji, 2005 • “Realizing that each component of the network affects every other one: The people, packets, machines, subnets, sessions, transactions, traffic, and any movement on the network on all layers” • Bednarczyk et al., Black Hat Consulting whitepaper

Some Terminology - Autonomics • Autonomics: full self care • Healing • Configuring • Protecting • Optimizing

Autognostics • Autognostics: key player in autonomics • Capacity for networks to be self-aware • Adapt to applications • Autonomously • Monitoring • Identifying • Diagnosing • Resolving issues • Verifying and reporting

Autognostics Benefits • Provides autonomics with basis for response and validation • Supports self: • awareness • discovery • healing • optimization

Why Bayesian Network Awareness? • One possible implementation of several characteristics of Autonomic Networking • Combines Bayesian theory with Neural Networks (Bayesian: proven technology with success stories) • Calculates the probability of certain activities that may happen within a computer network

Bayesian Success Stories • Add probabilities to expert systems • NASA Mission Control • Vista System • Email • SpamAssassin • Office Assistant • paperclip guy

Belief Network • What we "believe" is happening • What our beliefs are based on • How we might find out if not true • Example of a belief network • NASA Mission Control – all ok ? • Use of belief networks • Probability of problems – abort ?

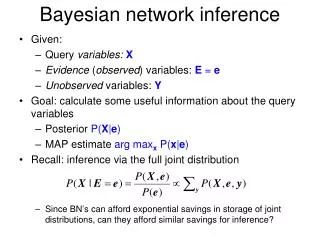

Bayesian Belief Network? • Powerful knowledge representation and reasoning tool under conditions of uncertainty. • Recently significant progress has been made in the area of probabilistic inference on belief networks. • Many belief network construction algorithms have been developed. • Defined by DAG and CPT

Bayesian Network - DAG • Directed acyclic graph (DAG) with a conditional probability distribution for each node • Bayesian Network uses DAG to represent dependency relationships between variables • DAG structure of such networks contains: • nodes representing domain variables • arcs between nodes representing probabilistic dependencies.

DAG Example (diagram) • Nodes: packetCollisions, packetDrops, packetBlackHoles, packetMalforms, packetLoss • Arc or Edge: a directed link between two nodes
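The node names from the DAG example can be encoded as a small parent map and checked for cycles. This is a minimal sketch, not the presenters' implementation: the arc directions (each of the four packet events pointing at packetLoss) are an assumption, since the transcript lists the nodes but not which way the arcs run. The acyclicity check uses Python's standard `graphlib` module.

```python
from graphlib import CycleError, TopologicalSorter

# Parent map: node -> set of nodes it depends on.
# ASSUMPTION: the four packet events are parents of packetLoss.
parents = {
    "packetCollisions": set(),
    "packetDrops": set(),
    "packetBlackHoles": set(),
    "packetMalforms": set(),
    "packetLoss": {
        "packetCollisions",
        "packetDrops",
        "packetBlackHoles",
        "packetMalforms",
    },
}


def is_dag(graph):
    """Return True if the parent map contains no directed cycle."""
    try:
        # static_order() raises CycleError when a cycle exists.
        tuple(TopologicalSorter(graph).static_order())
        return True
    except CycleError:
        return False


# A topological order lists every parent before its children,
# which is the order in which node probabilities can be evaluated.
evaluation_order = list(TopologicalSorter(parents).static_order())
```

With this layout, `packetLoss` always comes last in `evaluation_order` because it depends on every other node.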

Bayesian Network - CPT • Second component consists of one conditional probability table (CPT) for each node • Nodes represent network activity • Activity can be ranked into ranges • Low – Medium - High • Activity baseline can be learned in an ongoing manner
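The ranking and baseline ideas above can be sketched in a few lines. Everything specific here is an assumption for illustration: the ratio thresholds, the exponentially weighted moving average used as the "learned" baseline, and the probabilities in the sample CPT are all invented, not taken from the talk.

```python
def rank_activity(value, baseline, low_ratio=0.5, high_ratio=1.5):
    """Rank an activity measurement as Low, Medium, or High.

    ASSUMPTION: ranges are defined relative to the learned baseline.
    """
    if value < low_ratio * baseline:
        return "Low"
    if value > high_ratio * baseline:
        return "High"
    return "Medium"


def update_baseline(baseline, value, alpha=0.1):
    """Learn the baseline in an ongoing manner with a moving average."""
    return (1 - alpha) * baseline + alpha * value


# Hypothetical CPT: P(packetLoss level | packetDrops level).
# Outer key is the parent's state; each row is a distribution
# over the child's states and must sum to 1.
cpt_packet_loss = {
    "Low": {"Low": 0.90, "Medium": 0.08, "High": 0.02},
    "Medium": {"Low": 0.30, "Medium": 0.55, "High": 0.15},
    "High": {"Low": 0.05, "Medium": 0.25, "High": 0.70},
}

# Look up the belief about packetLoss given an observed drop count.
drops_level = rank_activity(value=200, baseline=100)
loss_belief = cpt_packet_loss[drops_level]
```

Each row of the table is one conditional distribution, so a node with more parents needs one row per combination of parent states.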

BNA: Concept 1 • Filter out everything normal on an ongoing basis so we can see only what is really not normal (pain) with minimal false positives • Concentrate design effort on the most critical components • monitor stack distributed event reconstruction • sensor node architecture

Monitor Stack Concept (diagram) • Packet Stream • IP Analyzer • TCP Analyzer, UDP Analyzer • HTTP, DNS, SMTP, Telnet • Vector of Scalars • Sensor Nodes

BNA: Concept 2 • Feed forward adaptation – live data • Enhance granularity for hot issues • Use effectors for proactive intervention • Validate intervention-outcome relation • Self supervision • Growth-Prune model • right size to context

BNA: Concept 3 • Implement proprioceptive concept • Proprioceptive meta-view • Abnormal activity (pain) reactivity • Conditioning - look ahead context • Generalize to novel data • Learn how to adapt

Supercomputing Concept • Integrate BNA into compute, storage, visualization infrastructure • Learn particular eccentricities • apps … network events • distributed context • Similar to NASA

Sensor Nodes • Data input nodes of neural network • Sensor nodes receive monitor stack output • Statistics by category – metadata structure • Stream event summaries • Special protocol events • Distributed event correlations • Usually accept a vector of scalars • Each scalar has an associated weight which can be adjusted by learning
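A sensor node that accepts a vector of scalars with adjustable weights might look like the sketch below. The sigmoid activation, the bias term, and the delta-rule update are assumptions chosen for illustration; the slides only state that each scalar has a weight that learning can adjust.

```python
import math


class SensorNode:
    """Hypothetical sensor node: weighted sum of scalars, then a sigmoid."""

    def __init__(self, weights, bias=0.0):
        self.weights = list(weights)
        self.bias = bias

    def activate(self, scalars):
        """Return an activation in (0, 1) for one input vector."""
        if len(scalars) != len(self.weights):
            raise ValueError("input length must match number of weights")
        total = sum(w * x for w, x in zip(self.weights, scalars)) + self.bias
        return 1.0 / (1.0 + math.exp(-total))

    def learn(self, scalars, target, rate=0.1):
        """Adjust weights one step toward the target (delta rule)."""
        output = self.activate(scalars)
        error = target - output
        # Derivative of the sigmoid at the current output.
        gradient = error * output * (1.0 - output)
        self.weights = [
            w + rate * gradient * x for w, x in zip(self.weights, scalars)
        ]
        self.bias += rate * gradient
        return error


node = SensorNode(weights=[0.0, 0.0])
before = node.activate([1.0, 2.0])
node.learn([1.0, 2.0], target=1.0)
after = node.activate([1.0, 2.0])
```

After one learning step toward a target of 1.0, the activation for the same input moves above its starting value.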

Known Issues/Challenges • Scalability: computationally extremely expensive • Bayesian inference relies on degrees of belief, not an objective method of induction • Generalization to novel data is problematic • Bayesian Network maintenance over time • The construction of belief networks remains a time-consuming task overall

Perceived BNA Potential • What scalars have meaning wrt BNA • key indicators • How to change the network, then validate • what effectors • how to validate • How to interface to operators • analysis/decision - output

Probability of Engine Failure 1 • Kevin H. Knuth, Intelligent Systems Division, NASA • Bayes' theorem as a learning rule • tells us how to update our prior knowledge when we receive new data • p(model|data, I) is called the posterior probability • what we learned from data combined with our prior knowledge • I represents prior information

Probability of Engine Failure 2 • Kevin H. Knuth, Intelligent Systems Division, NASA • p(model|I) is called the prior probability • describes the degree to which we believe a specific model is the correct description before we see any data • p(data|model, I) is called the likelihood • describes the degree to which we believe that the model could have produced the currently observed data • denominator p(data|I) is called the evidence
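Putting the four terms together, Bayes' theorem reads p(model|data, I) = p(data|model, I) p(model|I) / p(data|I). The sketch below applies it to a two-model engine example. The model names and every probability are invented for illustration and are not NASA figures.

```python
def posterior(prior, likelihood):
    """Apply Bayes' theorem over a discrete set of models.

    prior:      model -> p(model | I)
    likelihood: model -> p(data | model, I)
    returns:    model -> p(model | data, I)
    """
    # Evidence p(data | I): total probability of the data over all models.
    evidence = sum(prior[m] * likelihood[m] for m in prior)
    if evidence == 0:
        raise ValueError("data is impossible under every model")
    return {m: prior[m] * likelihood[m] / evidence for m in prior}


# ASSUMED numbers: failures are rare a priori, but the observed
# sensor reading is far more likely if the engine has failed.
prior = {"engine_ok": 0.99, "engine_failure": 0.01}
likelihood = {"engine_ok": 0.05, "engine_failure": 0.90}

belief = posterior(prior, likelihood)
```

With these made-up inputs, the belief in engine failure rises from 1% to roughly 15%: the reading is strong evidence, but the low prior keeps the posterior well short of certainty.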

Strategies for Sensor Nodes 1 • Use sparse matrix approach • Minimal number for what is important • Reduce overall network complexity • Disable nodes that are not contributing • Minimize number of edges in DAG • Minimize CPT size
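One way to see why fewer edges means smaller CPTs: a node with k parents, each taking one of three states (Low, Medium, High), needs 3^k rows in its table. The sketch below prunes non-contributing nodes and recounts the rows. The `contribution` scores and the threshold are hypothetical; the slides do not say how contribution is measured.

```python
def prune(parents, contribution, threshold=0.01):
    """Drop nodes whose contribution score falls below the threshold.

    parents:      node -> set of parent nodes
    contribution: node -> score (ASSUMED measure, e.g. learned weight)
    """
    keep = {n for n in parents if contribution.get(n, 0.0) >= threshold}
    return {
        node: {p for p in node_parents if p in keep}
        for node, node_parents in parents.items()
        if node in keep
    }


def cpt_rows(parents, states=3):
    """Rows per CPT: one for each combination of parent states."""
    return {node: states ** len(ps) for node, ps in parents.items()}


parents = {
    "packetCollisions": set(),
    "packetDrops": set(),
    "packetBlackHoles": set(),
    "packetMalforms": set(),
    "packetLoss": {
        "packetCollisions",
        "packetDrops",
        "packetBlackHoles",
        "packetMalforms",
    },
}
# Invented scores: two event nodes contribute almost nothing.
contribution = {
    "packetLoss": 1.0,
    "packetCollisions": 0.5,
    "packetDrops": 0.4,
    "packetBlackHoles": 0.001,
    "packetMalforms": 0.0,
}

pruned = prune(parents, contribution)
rows_before = cpt_rows(parents)["packetLoss"]
rows_after = cpt_rows(pruned)["packetLoss"]
```

Removing two of the four parents shrinks the packetLoss table from 81 rows to 9, which is the kind of saving the sparse approach is after.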

Strategies for Sensor Nodes 2 • Growing sensor based neural gas • Use self organizing map (SOM) • right size SOM growth • SOM/Sensor growth/prune model • Self organizing overlay network • Self determined connection model • Top level handoff to Bayesian nodes • node model • commonalities • Inherit behaviour

What is a Monitor Stack 1? • typical link packed with heterogeneous traffic - sources of variety • Packets from different hosts, particular conversations - applications • Some carrying asynchronous unidirectional communiqués with no acknowledgement • Each packet has series of headers • direct the operation of protocols

What is a Monitor Stack 2? • Pace and duration of connections is variable • Automated transactions such as name server and HTTP requests often take less than a second • Login sessions operated directly by humans may persist for hours • Payloads carried vary remarkably between applications. • Some hold exactingly formatted text, others encode manual edits of users at keyboards • Others send binary data in large blocks

What is a Monitor Stack 3? • Monitor stack uses protocol analyzers to reduce and segregate traffic • Packets produced by layered protocols so monitors similarly layered • statistics about lower protocol layers • reconstruction of data streams
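The layered reduction described above can be sketched as a chain of analyzers, one per protocol layer, each turning packets into a few statistics that are then flattened into a vector of scalars for the sensor nodes. The packet dictionary fields (`length`, `transport`, `app`) and the statistic names are hypothetical; a real monitor would parse headers from captured traffic.

```python
from collections import Counter

APPLICATIONS = ("HTTP", "DNS", "SMTP", "Telnet")


def ip_analyzer(packets):
    """Lowest layer: overall packet and byte counts."""
    return {
        "ip_packets": len(packets),
        "ip_bytes": sum(p["length"] for p in packets),
    }


def transport_analyzer(packets):
    """Middle layer: split traffic into TCP and UDP."""
    counts = Counter(p["transport"] for p in packets)
    return {"tcp_packets": counts["TCP"], "udp_packets": counts["UDP"]}


def application_analyzer(packets):
    """Top layer: count packets per recognized application protocol."""
    counts = Counter(p.get("app") for p in packets)
    return {f"{app.lower()}_packets": counts[app] for app in APPLICATIONS}


def monitor_stack(packets):
    """Run every layer and flatten the statistics into a vector."""
    stats = {}
    for analyzer in (ip_analyzer, transport_analyzer, application_analyzer):
        stats.update(analyzer(packets))
    names = sorted(stats)  # fixed order so vector positions are stable
    return names, [float(stats[name]) for name in names]


# Three made-up packets standing in for a captured stream.
packets = [
    {"length": 60, "transport": "UDP", "app": "DNS"},
    {"length": 1500, "transport": "TCP", "app": "HTTP"},
    {"length": 40, "transport": "TCP", "app": None},
]
names, vector = monitor_stack(packets)
```

Sorting the statistic names keeps each scalar at the same index from one sample to the next, so a sensor node's weights stay aligned with the quantities they were learned for.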

Similar Solutions • Netuitive’s Real Time Analysis Engine • Continuously analyzes and correlates various performance variables, such as CPU utilization, memory consumption, thread allocation, traffic volume • Uses self-learning correlation and regression analysis algorithms to self-discover and study the relationships among variables • Automatically determines normal system behavior and predicts abnormalities, and triggers alarms