Dynamic Bayesian Network

Dynamic Bayesian Network. Fuzzy Systems Lifelog management. Outline. Introduction Definition Representation Inference Learning Comparison Summary. C. A. B. A. B. D. Brief Review of Bayesian Networks. Graphical representations of joint distributions:.

Dynamic Bayesian Network

E N D

Presentation Transcript

Dynamic Bayesian Network Fuzzy SystemsLifelog management

Outline • Introduction • Definition • Representation • Inference • Learning • Comparison • Summary

C A B A B D Brief Review of Bayesian Networks • Graphical representations of joint distributions: Static world, each random variable has a single fixed value. Mathematical formula used for calculating conditional probabilities. Develop by the mathematician and theologian Thomas Bayes (published in 1763)

Introduction • Dynamic system • Sequential data modeling (part of speech) • Time series modeling (activity recognition) • Classic approaches • Linear models: ARIMA (autoregressive integrated moving average), ARMAX (autoregressive moving average exogenous variables model) • Nonlinear models: neural networks, decision trees • Problems • Prediction of the future based on only a finite window • Difficult to incorporate prior knowledge • Difficult to deal with multi-dimensional inputs and/or outputs • Recent approaches • Hidden Markov models (HMMs): discrete random variable • Kalman filter models (KFMs): continuous state variables • Dynamic Bayesian networks (DBNs)

Transportation Mode: Walking, Running, Car, Bus True velocity and location Observed location Motivation Time = t Mt Xt Ot Time = t+1 Mt+1 Need conditional probability distributions e.g. a distribution on (velocity, location) given the transportation mode Prior knowledge or learned from data Given a sequence of observations (Ot), find the most likely Mt’s that explain it. Or could provide a probability distribution on the possible Mt’s. Xt+1 Ot+1

Outline • Introduction • Definition • Representation • Inference • Learning • Comparison • Summary

frame i-1 frame i frame i+1 C C C A A B B A B D D D Dynamic Bayesian Networks • BNs consisting of a structure that repeats an indefinite (or dynamic) number of times • Time-invariant: the term ‘dynamic’ means that we are modeling a dynamic model, not that the networks change over time • General form of HMMs and KFLs by representing the hidden and observed state in terms of state variables of complex interdependencies

Formal Definition • Defined as • : a directed, acyclic graph of starting nodes (initial probability distribution) • : a directed, acyclic graph of transition nodes (transition probabilities between time slices) • : starting vectors of observable as well as hidden random variable • : transition matrices regarding observable as well as hidden random variables

Outline • Introduction • Definition • Representation • Inference • Learning • Comparison • Summary

Representation (1): Problem • Target: Is it raining today? • Necessity to specify an unbounded number of conditional probability table, one for each variable in each slice • Each one might involve an unbounded number of parents next step: specify dependencies among the variables.

Representation (2): Solution • Assume that change in the world state are caused by a stationary process (unmoving process over time) • Use Markov assumption - The current state depends on only in a finite history of previous states. Using the first-order Markov process: • In addition to restricting the parents of the state variable Xt, we must restrict the parents of the evidence variable Et is the same for all t Transition Model Sensor Model

. . . . . . Wi-1 Wi Wi+1 . . . . . . Wi-1 Wi Wi+1 Representation: Extension • There are two possible fixes if the approximation is too inaccurate: • Increasing the order of the Markov process model. For example, adding as a parent of , which might give slightly more accurate predictions • Increasing the set of state variables. For example, adding to allow to incorporate historical records of rainy seasons, or adding , and to allow to use a physical model of rainy conditions • Bigram • Trigram

Outline • Introduction • Definition • Representation • Inference • Learning • Comparison • Summary

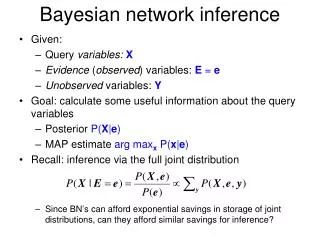

Inference: Overview • To infer the hidden states X given the observations Y1:t • Extend HMM and KFM’s / call BN inference algorithms as subroutines • NP-hard problem • Inference tasks • Filtering(monitoring): recursively estimate the belief state using Bayes’ rule • Predict: computing P(Xt| y1:t-1 ) • Updating: computing P(Xt | y1:t ) • Throw away the old belief state once we have computed the prediction(“rollup”) • Smoothing: estimate the state of the past, given all the evidence up to the current time • Fixed-lag smoothing(hindsight): computing P(Xt-1 | y1:t ) where l > 0 is the lag • Prediction: predict the future • Lookahead: computing P(Xt+h | y1:t) where h > 0 is how far we want to look ahead • Viterbi decoding: compute the most likely sequence of hidden states given the data • MPE(abduction): x*1:t = argmax P(x1:t | y1:t )

Inference: Comparison • Filtering: r = t • Smoothing: r > t • Prediction: r < t • Viterbi: MPE

Inference: Filtering • Compute the belief state - the posterior distribution over the current state, given all evidence to date • Filtering is what a rational agent needs to do in order to keep track of the current state so that the rational decisions can be made • Given the results of filtering up to time t, one can easily compute the result for t+1 from the new evidence (for some function f) (dividing up the evidence) (using Bayes’ Theorem) (by the Marcov propertyof evidence) α is a normalizing constant used to make probabilities sum up to 1

Inference: Filtering • Illustration for two steps in the Umbrella example: • On day 1, the umbrella appears so U1=true • The prediction from t=0 to t=1 is and updating it with the evidence for t=1 gives • On day 2, the umbrella appears so U2=true • The prediction from t=1 to t=2 is and updating it with the evidence for t=2 gives

Inference: Smoothing • Compute the posterior distribution over the past state, given all evidence up to the present • Hindsight provides a better estimate of the state than was available at the time, because it incorporates more evidence for some k such that 0 ≤ k < t.

Inference: Prediction • Compute the posterior distribution over the future state, given all evidence to date • The task of prediction can be seen simply as filtering without the addition of new evidence for some k>0

Inference: Most Likely Explanation (MLE) • Compute the sequence of states that is most likely to have generated a given sequence of observation • Algorithms for this task are useful in many applications, including speech recognition • There exist a recursive relationship between the most likely paths to each state Xt+1 and the most likely paths to each state Xt. This relationship can be write as an equation connecting the probabilities of the paths:

Inference: Algorithms • Exact Inference algorithms • Forwards-backwards smoothing algorithm (on any discrete-state DBN) • The frontier algorithm (sweep a Markov blanket, the frontier set F, across the DBN, first forwards and then backwards) • The interface algorithm (use only the set of nodes with outgoing arcs to the next time slice to d-separate the past from the future) • Kalman filtering and smoothing • Approximate algorithms: • The Boyen-Koller (BK) algorithm (approximate the joint distribution over the interface as a product of marginals) • Factored frontier (FF) algorithm / Loopy propagation algorithm (LBP) • Kalman filtering and smoother • Stochastic sampling algorithm: • Importance sampling or MCMC (offline inference) • Particle filtering (PF) (online)

Outline • Introduction • Definition • Representation • Inference • Learning • Comparison • Summary

Learning (1) • The techniques for learning DBN are mostly straightforward extensions of the techniques for learning BNs • Parameter learning • The transition model P(Xt | Xt-1) / The observation model P(Yt | Xt) • Offline learning • Parameters must be tied across time-slices • The initial state of the dynamic system can be learned independently of the transition matrix • Online learning • Add the parameters to the state space and then do online inference (filtering) • The usual criterion is maximum-likelihood(ML) • The goal of parameter learning is to compute • θ*ML = argmaxθP( Y| θ) = argmaxθlog P( Y| θ) • θ*MAP = argmaxθlog P( Y| θ) + logP(θ) • Two standard approaches: gradient ascent and EM(Expectation Maximization)

Learning (2) • Structure learning • The intra-slice connectivity must be a DAG • Learning the inter-slice connectivity is equivalent to the variable selection problem, since for each node in slice t, we must choose its parents from slice t-1. • Learning for DBNs reduces to feature selection if we assume the intra-slice connections are fixed

Outline • Introduction • Definition • Representation • Inference • Learning • Comparison • Summary

Comparison (HMM: Hidden Markov Model) • Structure • One discrete hidden node (X: hidden variables) • One discrete or continuous observed node per time slice (Y: observations) • Parameters • The initial state distribution P( X1 ) • The transition model P( Xt | Xt-1 ) • The observation model P( Yt | Xt ) • Features • A discrete state variable with arbitrary dynamics and arbitrary measurements • Structures and parameters remain same over time X1 X2 X3 X4 Y1 Y2 Y3 Y4

frame i-1 frame i frame i+1 .7 .8 1 Qi-1 Qi+1 Qi .3 .2 . . . . . . P(qi|qi-1) 3 P(obsi | qi) 1 2 obsi-1 obsi+1 obsi qi 1 2 3 qi-1 q=1 1 .7 .3 0 obs q=2 2 0 .8 .2 obs obs q=3 3 0 0 1 = variable = state = allowed dependency = allowed transition Comparison with HMMs • HMMs • DBNs

Comparison (KFL: Kalman Filter Model) • KFL has the same topology as an HMM • All the nodes are assumed to have linear-Gaussian distributions • x(t+1) = F*x(t) + w(t), • w ~ N(0, Q) : process noise, x(0) ~ N(X(0), V(0)) • y(t) = H*x(t) + v(t), • v ~ N(0, R) : measurement noise • Features • A continuous state variable with linear-Gaussian dynamics and measurements • Also known as Linear Dynamic Systems(LDSs) • A partially observed stochastic process • With linear dynamics and linear observations: f( a + b) = f(a) + f(b) • Both subject to Gaussian noise X1 X2 Y1 Y2

Comparison with HMM and KFM • DBN represents the hidden state in terms of a set of random variables • HMM’s state space consists of a single random variable • DBN allows arbitrary CPDs • KFM requires all the CPDs to be linear-Gaussian • DBN allows much more general graph structures • HMMs and KFMs have a restricted topology • DBN generalizes HMM and KFM (more expressive power)

Summary • DBN: a Bayesian network with a temporal probability model • Complexity in DBNs • Inference • Structure learning • Comparison with other methods • HMMs: discrete variables • KFMs: continuous variables • Discussion • Why to use DBNs instead of HMMs or KFMs? • Why to use DBNs instead of BNs?