Bayesian Network Classifier

Bayesian Network Classifier. Not so naïve any more or Bringing causality into the equation. Review. Before covering Bayesian Belief Networks…. A little review of the Naïve Bayesian Classifier. Approach.

Bayesian Network Classifier

E N D

Presentation Transcript

Bayesian Network Classifier Not so naïve any more or Bringing causality into the equation

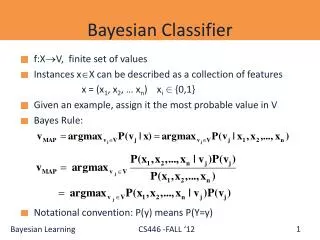

Review • Before covering Bayesian Belief Networks… A little review of the Naïve Bayesian Classifier Bayes Network

Approach • Looks at the probability that an instance belongs to each class given its value in each of its dimensions P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P P Bayes Network

Example: Redness • If one of the dimensions was “redness” For a given redness value which is the most probable fruit Bayes Network

Bayes Theorem • Above from the book • h is hypothesis, D is training Data Bayes Network

If Non-Parametric… • 2506 apples • 2486 oranges • Probability that redness would be 4.05 if know an apple • About 10/2506 • P(apple)? • 2506/(2506+2486) • P(redness=4.05) • About (10+25)/(2506+2486) ? Bayes Network

Bayes • I think of the ratio of P(h) to P(D) as an adjustment to the easily determined P(D|h) in order to account for differences in sample size Posterior Probability Prior Probabilities or Priors Bayes Network

Naïve Bayes Classifier • The Naïve term comes from… • Where vj is class and ai is an attribute • Derivation Bayes Network

Can remove the naïveness • Go with covariance matrix instead of standard deviation Bayes Network

Solution Bayes Network

Lot of work • Need Bayes rule • Instead of simply multiplying each dimensional probability… • Must compute a multivariate covariance matrix (num dim x num dim) • Calculate multivariate PDF and all priors • Includes getting inverse of covariance matrix • Only useful if covariance is strong and predictive component of the data Bayes Network

Other ways of removing naiveté • Sometimes useful to infer causal relationships Causes Dim x Dim y Bayes Network

If can figure out… • …causal relationship between dimensions no longer independent • Conditional • In terms of bins? Causes Dim x Dim y Bayes Network

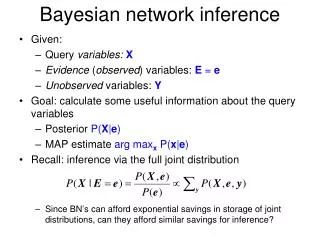

Problem • Determine a dependency network from the data • Use the dependencies to determine probabilities that an instance is a given class • Use those probabilities to classify Dim 4 Dim 2 Dim 1 Dim 3 Dim 5 Bayes Network

DAG Directed acyclic graph Used to represent a dependency network Known as a Bayesian Belief Network Structure Dim 4 Dim 2 Dim 1 Dim 3 Dim 5 Bayes Network

Algorithm Not in book: 39 page paper A Bayesian Method for the Induction of Probabilistic networks from Data Known as the K2 algorithm Bayes Network

Similar to Decision Tree Each node is a dimension Instead of representing a decision it represents a conditional relationship Algorithm for selecting nodes is greedy Dim 4 Dim 2 Dim 1 Dim 3 Dim 5 Quote from paper: “The algorithm is named K2 because it evolved from a system named Kutató(Herskovits & Cooper, 1990) that applies the same greedy-search heuristics. As we discuss in section 6.1.3, Kutatóuses entropy to score network structures.” Bayes Network

General Approach Determine the no-parent score for a given node (dimension) For each remaining dimension (node) Determine the probability (score) that each dimension is the parent for the given node(does a dependency appear to exist) Compare the score of the best to the no-parent score If better, keep as parent and repeat (see if can add another parent) Otherwise done Greedy Find the parents of each node Find best parent If improve, keep Find next “best parent” Bayes Network

How Score? The probability that a given data “configuration” could belong to a given DAG Do records with a given value in one dimension tend to have a specific value in another dimension Storm BusTourGroup Lightning Campfire Example from Book Thunder ForestFire Bayes Network

Bayesian Belief Network BS Belief Network Structure How probable? Don’t panic! We’ll get through it Bayes Network

Proof Last 5 pages in the paper (39 pages total) A Bayesian Method for the Induction of Probabilistic networks from Data Bayes Network

Bayesian Belief Network BS Belief Network Structure How probable? • n=num dims (nodes) • q=num unique instantiations of parents • If one parent, q = number of distinct vals in parent • If two parents, q = num in p1*num in p2 • r=num distinct vals possible in dimension • Nijk=number of records with value = k in current dim that match parental instantiation • Nij=Number of records that match parental instantiation • (sum of Nijk’s) Bayes Network

Intuition Think of as a random match probability What are the chances that the values seen in a dimension (for records that match the parental instantiation) could occur randomly? Think of the as an adjustment upward (since it will show up in the numerator) indicating how the data is actually organized How organized is the data in the child dimension? Example 6!0! is 720 while 3!3! is 36 Sound familiar? Bayes Network

Algorithm Greedy algorithm for finding parents For a given dimension Check no parent probability, store in Pold Then choose parent that maximizes g If that probability is greater than Pold, add to list of parents, update Pold Keep adding till can’t increase probability Bayes Network

No Parent Probability? There is only one “instantiation” No parent filtering, so Nij is all training samples Nijkis number in training set where current dimension is value vj Orphan Bayes Network

Example from paper Three nodes Two instantiations for parent X2 (of child X3) Parent X2 has value absent Parent X2 has value present Two instantiations for parent X1 Parent X1 has value absent Parent X1 has value present Bayes Network

X2 instantiation with val absent Number of X3 absents that were X2 absents: 4 Number of X3 presents that were X2 absents: 1 X2instantion: val present X3 absent|X2 present: 0 X3present|X2present: 5 Some numbers Bayes Network

For dimension (i) 3 X3 Calculations Bayes Network

X1 instantiation with val absent Number of X2 absents that were X1 absents: 4 Number of X2 presents that were X1 absents: 1 X1instantion: val present X2 absent|X1present: 1 X2 present|X1present: 4 Some more numbers Bayes Network

For dimension (i) 2 X2 Calculations Bayes Network

Dimension 1 has no parents Number of X1 absents: 5 Number of X1 presents: 5 Some more numbers Bayes Network

For dimension (i) 1 Xi Calculations Bayes Network

The whole enchilada The article calls this BS1 Putting it all Together X1 X2 X3 X1 X2 X3 Bayes Network

Comparing networks Article compares BS1 to BS2 X2 X1 X3 Assume that P(BS1)=P(BS2) S1 ten times more probable than S2 Bayes Network

Remember Not calculating whole tree Just a set of parents for a single node No need for first product Bayes Network

Result We get a list of most likely parents for each node Storm BusTourGroup Lightning Campfire Thunder ForestFire Bayes Network

1. procedure K2; 2, {Input: A set of n nodes, an ordering on the nodes, an upper bound u on the 3. number of parents a node may have, and a database D containing m cases. } 4. {Output: For each node, a printout of the parents of the node.} 5. For i := 1 to n Do 6. πi: =∅; 7. Pold := g(i, πi); {This function is computed using equation (12).} 8. OKToProceed := true 9. while OKToProceed and |πi| < u do 10. let z be the node in Pred(xi) - πi that maximizes g(i, πi U {z}); 11. Pnew := g(i, πi U {z}); 12. if Pnew > Pold then 13. Pold := Pnew; 14. πi := πi U {z} !5. else OKToProceed := false; 16. end {while}; 17. write('Node:', xi, 'Parents of this node:; πi) 18. end {for}; 19. end {K2}; K2 algorithm (more formally) The algorithm is named K2 because it evolved from a system named Kutató(Herskovits & Cooper, 1990) that applies the same greedy-search heuristics. As we discuss in section 6.1.3, Kutatóuses entropy to score network structures. Bayes Network

The “g” function Function g(i,set of parents){ Set score = 1 If set of parents is empty Nij is the size of entire training set and Sv is entire training set Score *= For each child instantiation (e.g. 0 and 1) Get count of training record subset items (in Sv) That will be Nijk (in the case of two instantiations there will be two Nijk’s, and ri = 2) Score *= Else Get parental instantiations (e.g. 00,01,10,11) For each parental instantiation Get the training records that match (Sv) Size of that set is Nij Score *= For each child instantiation (e.g. 0 and 1) Get count of training record subset items (in Sv) That will be Nijk (in the case of two instantiations there will be two Nijk’s, and ri = 2) Score *= Return Score } Bayes Network

Implementation Straightforward Pred(i): returns all nodes that come before I in ordering g: our formula Bayes Network

How get instantiations If no parents Work with all records in training set to accumulate counts for current dimension No-parent approach Bayes Network

How get instantiations With parents My first attempt What’s wrong with this? • For each parent • For each possible value in that parent’s dimension • Accumulate values • End for • End for Bayes Network

Instantiations Have to know which values to use for every parent when accumulating counts Must get all instantiations first parents: 0 2 3 4 instantiations 0 0 0 0 0 0 0 1 0 0 1 0 0 0 1 1 0 1 0 0 0 1 0 1 0 1 1 0 0 1 1 1 1 0 0 0 1 0 0 1 1 0 1 0 1 0 1 1 1 1 0 0 1 1 0 1 1 1 1 0 1 1 1 1 • For each instantiation • Compute first portion of numerator log((ri – 1)!) • For each possible value in the current dim • Get counts that match instantiation and val in current dim • Update a sum of counts (for Nij) • Update sum of log factorials (for Nijk’s) • End for • Add sum of log factorials to original numerator • Compute denominator log(Nij + ri -1)!) • Subtract from numerator • End for Bayes Network

But how… How generate the instantiations? What if had different number’s of legal values in each dimension? I generated an increment function parents: 0 2 3 4 instantiations 0 0 0 0 0 0 0 1 0 0 1 0 0 0 1 1 0 1 0 0 0 1 0 1 0 1 1 0 0 1 1 1 1 0 0 0 1 0 0 1 1 0 1 0 1 0 1 1 1 1 0 0 1 1 0 1 1 1 1 0 1 1 1 1 Bayes Network

what’s this Ordering Nonsense? It’s got to be a DAG (Acyclic) The algorithm ensures this with an ordering An assumed order Pred(i) returns all nodes that occur earlier in the ordering An ordering on the nodes… Bayes Network

How get around order issue? Paper gives a couple of suggestions Could randomly shuffle order Do several times Take best scoring network Perhaps whole different approach to generating network Start with fully connected Remove edge that increases the P(BS) the most Continue until can’t increase Use whichever is better (random or reverse) Random Backwards Bayes Network

Even with ordering … Number of possible structures grows exponentially Paper states Even with an ordering constraint there are networks Once again binary membership (1 means edge is part of graph, 0 not) All unidirectional edges: Think distance matrix (from row to column) From Descartes Book states Exact inference of probabilities in general for an arbitrary Bayesian network is known to be NP-hard (guess who… Cooper 1990) NP-hard Bayes Network

is one over the count of all Bayesian Belief Structures (BS’s ) That’s a lot of networks and is at the heart of the derivation P(BS) Bayes Network

How do we know the order? A priori, no knowledge of network structure Storm BusTourGroup Lightning Campfire Thunder ForestFire Bayes Network

Bigger example What if have a thousand training When determining first Pold (no parents) What will Nij be? How get around? Perl presents 1000! as “inf” Bayes Network

Paper discussed in time complexity section Switching to log values Could add and subtract instead of multiply and divide (faster) They even pre-calculated every log-factorial value Up to the number of training values plus the maximum number of distinct values Approach that helped time complexity also helps in managing extremely large numbers Bayes Network