Bayesian Network


Bayesian Network By DengKe Dong

Key Points Today • Intro to Graphical Model • Conditional Independence • Intro to Bayesian Network • Reasoning BN: D-Separation • Inference In Bayesian Network • Belief Propagation • Learning in Bayesian Network

Intro to Graphical Model • Two types of graphical models: -- Directed graphs (aka Bayesian Networks) -- Undirected graphs (aka Markov Random Fields) • The graph structure plus its associated parameters define a joint probability distribution over the set of variables (nodes)


Conditional Independence • Definition: X is conditionally independent of Y given Z if the probability distribution governing X is independent of the value of Y, given the value of Z. We denote this as (X ⊥ Y | Z), which holds if (∀ i, j, k) P(X = xi | Y = yj, Z = zk) = P(X = xi | Z = zk)

Conditional Independence • Conditioning on parents: a conditional probability distribution (CPD) is associated with each node N, defined as P(N | Parents(N)), where the function Parents(N) returns the set of N's immediate parents


Bayesian Network Definition • Definition: A directed acyclic graph defining a joint probability distribution over a set of variables, where each node denotes a random variable, and each edge denotes the dependence between the connected nodes. • for example:

Bayesian Network Definition • Conditional Independencies in Bayesian Network: Each node is conditionally independent of its non-descendants, given only its immediate parents. So the joint distribution over all variables in the network is defined in terms of these CPDs, plus the graph.

Bayesian Network Definition • Example: • Chain rule of probability: P(S,L,R,T,W) = P(S)P(L|S)P(R|S,L)P(T|S,L,R)P(W|S,L,R,T) • With a CPD for each node Xi of the form P(Xi | Pa(Xi)): P(S,L,R,T,W) = P(S)P(L|S)P(R|S)P(T|L)P(W|L,R) • So, in a Bayes net: P(X1, …, Xn) = ∏i P(Xi | Pa(Xi))
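The factorization on this slide can be checked numerically. The sketch below assumes every variable is binary and uses made-up CPD values (none of the numbers come from the slides); it builds the joint as P(S)P(L|S)P(R|S)P(T|L)P(W|L,R), confirms that it sums to 1, and compares how many parameters the network needs against a full joint table.

```python
from itertools import product

# Hypothetical CPDs for binary variables; every number is an illustrative assumption.
P_S1 = 0.2                                   # P(S=1)
P_L1_GIVEN_S = {1: 0.7, 0: 0.05}             # P(L=1 | S)
P_R1_GIVEN_S = {1: 0.8, 0: 0.1}              # P(R=1 | S)
P_T1_GIVEN_L = {1: 0.95, 0: 0.01}            # P(T=1 | L)
P_W1_GIVEN_LR = {(0, 0): 0.6, (0, 1): 0.2,   # P(W=1 | L, R)
                 (1, 0): 0.1, (1, 1): 0.01}


def bern(p_one, value):
    """Probability of `value` for a binary variable with P(value=1) = p_one."""
    return p_one if value == 1 else 1.0 - p_one


def joint(s, l, r, t, w):
    """P(S,L,R,T,W) as the product of one CPD per node."""
    return (bern(P_S1, s)
            * bern(P_L1_GIVEN_S[s], l)
            * bern(P_R1_GIVEN_S[s], r)
            * bern(P_T1_GIVEN_L[l], t)
            * bern(P_W1_GIVEN_LR[(l, r)], w))


# The product of CPDs is a proper distribution: it sums to 1 over all 32 assignments.
total = sum(joint(*x) for x in product([0, 1], repeat=5))

# Free parameters: full joint table versus one row per parent configuration.
n_params_full = 2 ** 5 - 1
n_params_bn = 1 + 2 + 2 + 2 + 4

print(total, n_params_full, n_params_bn)
```

The parameter count is the practical payoff of the conditional independence assumptions: each CPD only needs one number per configuration of its parents.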

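Bayesian Network Definition • Construction • Choose an ordering over variables, e.g. X1, X2, …, Xn • For i = 1 to n • Add Xi to the network • Select parents Pa(Xi) as a minimal subset of X1 … Xi-1 such that P(Xi | Pa(Xi)) = P(Xi | X1, …, Xi-1) • Notice this choice of parents assures P(X1, …, Xn) = ∏i P(Xi | X1, …, Xi-1) (by the chain rule) = ∏i P(Xi | Pa(Xi)) (by construction)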


Reasoning BN: D-Separation • Conditional Independence, Revisited • We said: -- Each node is conditionally independent of its non-descendants, given its immediate parents • Does this rule give us all of the conditional independence relations implied by the Bayes network? • No • E.g., X1 and X4 are conditionally independent given {X2, X3} • But X1 and X4 are not conditionally independent given X3 alone • For this, we need to understand D-separation …

Reasoning BN: D-Separation • Three examples to understand D-Separation • Head to Tail • Tail to Tail • Head to Head

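Reasoning BN: D-Separation • Head to Tail (a → c → b) P(a,b,c) = P(a) P(c|a) P(b|c) • Given C: P(a,b|c) = P(a,b,c) / P(c) = P(a) P(c|a) P(b|c) / P(c) = P(a|c) P(b|c), so a and b are independent given c • Not Given C: P(a,b) = Σc P(a) P(c|a) P(b|c) = P(a) P(b|a), which in general is not equal to P(a) P(b)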

Reasoning BN: D-Separation • Tail to Tail (a ← c → b) P(a,b,c) = P(c) P(a|c) P(b|c) • Given C: P(a,b|c) = P(a,b,c) / P(c) = P(a|c) P(b|c), so a and b are independent given c • Not Given C: P(a,b) = Σc P(c) P(a|c) P(b|c), which in general is not equal to P(a) P(b)

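Reasoning BN: D-Separation • Head to Head (a → c ← b) P(a,b,c) = P(c|a,b) P(a) P(b) • Given C: P(a,b|c) = P(a,b,c) / P(c) = P(a) P(b) P(c|a,b) / P(c), which in general is not equal to P(a|c) P(b|c) • Not Given C: P(a,b) = Σc P(c|a,b) P(a) P(b) = P(a) P(b), so a and b are independent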
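The three cases can be verified numerically. The sketch below assumes binary variables and arbitrary, made-up CPD values; it builds each three-node joint from its factorization and tests whether a and b are independent marginally and given c. The helper names (`prob`, `independent`, `independent_given_c`) are illustrative only.

```python
from itertools import product


def bern(p_one, v):
    """Probability of `v` for a binary variable with P(v=1) = p_one."""
    return p_one if v == 1 else 1.0 - p_one


# Hypothetical CPD values, chosen only so that no dependence vanishes by accident.
def head_to_tail(a, b, c):   # a -> c -> b
    return bern(0.3, a) * bern(0.9 if a else 0.2, c) * bern(0.8 if c else 0.1, b)


def tail_to_tail(a, b, c):   # a <- c -> b
    return bern(0.4, c) * bern(0.9 if c else 0.2, a) * bern(0.7 if c else 0.1, b)


def head_to_head(a, b, c):   # a -> c <- b
    return bern(0.3, a) * bern(0.6, b) * bern(0.95 if (a or b) else 0.05, c)


def prob(joint, **fixed):
    """Sum the joint over all assignments that agree with the fixed values."""
    total = 0.0
    for a, b, c in product([0, 1], repeat=3):
        x = {"a": a, "b": b, "c": c}
        if all(x[name] == value for name, value in fixed.items()):
            total += joint(a, b, c)
    return total


def independent(joint, tol=1e-9):
    """Check P(a,b) = P(a) P(b) for every a, b."""
    return all(abs(prob(joint, a=a, b=b) - prob(joint, a=a) * prob(joint, b=b)) < tol
               for a, b in product([0, 1], repeat=2))


def independent_given_c(joint, tol=1e-9):
    """Check P(a,b|c) = P(a|c) P(b|c), written as P(a,b,c) P(c) = P(a,c) P(b,c)."""
    return all(abs(prob(joint, a=a, b=b, c=c) * prob(joint, c=c)
                   - prob(joint, a=a, c=c) * prob(joint, b=b, c=c)) < tol
               for a, b, c in product([0, 1], repeat=3))


results = {
    "head_to_tail": (independent(head_to_tail), independent_given_c(head_to_tail)),
    "tail_to_tail": (independent(tail_to_tail), independent_given_c(tail_to_tail)),
    "head_to_head": (independent(head_to_head), independent_given_c(head_to_head)),
}
for name, (marginal, given_c) in results.items():
    print(f"{name}: independent={marginal}, independent given c={given_c}")
```

Observing c blocks the path in the first two structures and opens it in the third, which is the "explaining away" effect.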

X and Y are conditionally independent given Z in every distribution that factorizes over the graph if and only if X and Y are D-separated by Z • Suppose we have three sets of random variables: X, Y and Z • X and Y are D-separated by Z (and therefore conditionally independent given Z) iff every path from any variable in X to any variable in Y is blocked • A path from variable A to variable B is blocked if it includes a node such that either • the arrows on the path meet head-to-tail or tail-to-tail at the node, and this node is in Z, or • the arrows meet head-to-head at the node, and neither the node nor any of its descendants is in Z
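The blocking rule can be turned into a small checker. The sketch below is a direct, brute-force reading of the definition: it enumerates every simple path between two single nodes and tests each interior node against the two blocking conditions. It is meant for small graphs only; the function names and the example edge list (the S, L, R, T, W network implied by the factorization P(S)P(L|S)P(R|S)P(T|L)P(W|L,R)) are assumptions made for illustration.

```python
def descendants(children, node):
    """All nodes reachable from `node` by following directed edges."""
    seen, stack = set(), [node]
    while stack:
        for child in children.get(stack.pop(), []):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen


def d_separated(edges, x, y, z):
    """True if nodes x and y are D-separated by the set z in the DAG given by `edges`.

    `edges` is a list of (parent, child) pairs; x and y must not be in z.
    """
    z = set(z)
    edge_set = set(edges)
    children, neighbours = {}, {}
    for u, v in edges:
        children.setdefault(u, []).append(v)
        neighbours.setdefault(u, set()).add(v)
        neighbours.setdefault(v, set()).add(u)

    def blocked(path):
        # Inspect every interior node together with its two path neighbours.
        for prev, node, nxt in zip(path, path[1:], path[2:]):
            meets_head_to_head = (prev, node) in edge_set and (nxt, node) in edge_set
            if meets_head_to_head:
                if node not in z and not (descendants(children, node) & z):
                    return True
            elif node in z:  # head-to-tail or tail-to-tail
                return True
        return False

    def simple_paths(path, visited):
        if path[-1] == y:
            yield path
            return
        for nb in neighbours.get(path[-1], ()):
            if nb not in visited:
                yield from simple_paths(path + [nb], visited | {nb})

    return all(blocked(p) for p in simple_paths([x], {x}))


# Assumed example graph: S -> L, S -> R, L -> T, L -> W, R -> W.
EDGES = [("S", "L"), ("S", "R"), ("L", "T"), ("L", "W"), ("R", "W")]

print(d_separated(EDGES, "L", "R", {"S"}))        # W is an unobserved collider
print(d_separated(EDGES, "L", "R", {"S", "W"}))   # observing W opens the path
```

Enumerating paths is exponential in the worst case; it is used here because it mirrors the definition line by line, not because it is how one would do this at scale.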


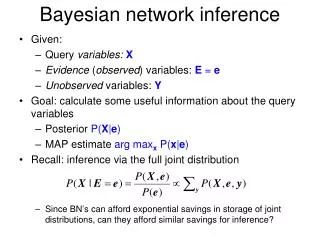

Inference In Bayesian Network • In general, exact inference is intractable (NP-hard) • For certain cases, it is tractable • Assigning a probability to a fully observed set of variables • Or if just one variable is unobserved • Or for singly connected graphs (i.e., no undirected loops) • Variable elimination • Belief propagation • For multiply connected graphs • Junction tree • Sometimes use Monte Carlo methods • Generate many samples according to the Bayes net distribution, then count up the results • Variational methods for tractable approximate solutions

Inference In Bayesian Network • Prob. of Joint assignment: easy • Suppose we are interested in joint assignment <F=f,A=a,S=s,H=h,N=n> • What is P(f,a,s,h,n)?



Inference In Bayesian Network • Prob. of marginals: not so easy • How do we calculate P(N = n)? • Sum the joint over every other variable: P(N = n) = Σf Σa Σs Σh P(F=f, A=a, S=s, H=h, N=n)
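A minimal sketch of this marginal computation follows. The network figure is not part of the transcript, so the structure used here (F → S ← A, S → H, S → N) and every CPD value are assumptions made for illustration. The first function sums the joint over all other variables by brute force; the second pushes the sums inward in the spirit of variable elimination and gives the same answer with less work.

```python
from itertools import product

# ASSUMED structure: F -> S <- A, S -> H, S -> N. All numbers are hypothetical.
P_F1 = 0.1                                                     # P(F=1)
P_A1 = 0.2                                                     # P(A=1)
P_S1 = {(0, 0): 0.05, (0, 1): 0.6, (1, 0): 0.8, (1, 1): 0.9}   # P(S=1 | F, A)
P_H1 = {0: 0.1, 1: 0.7}                                        # P(H=1 | S)
P_N1 = {0: 0.05, 1: 0.8}                                       # P(N=1 | S)


def bern(p_one, v):
    """Probability of `v` for a binary variable with P(v=1) = p_one."""
    return p_one if v == 1 else 1.0 - p_one


def joint(f, a, s, h, n):
    """P(f, a, s, h, n) under the assumed structure: one CPD lookup per node."""
    return (bern(P_F1, f) * bern(P_A1, a) * bern(P_S1[(f, a)], s)
            * bern(P_H1[s], h) * bern(P_N1[s], n))


def marginal_n(n):
    """Brute force: sum the joint over all 16 settings of F, A, S, H."""
    return sum(joint(f, a, s, h, n) for f, a, s, h in product([0, 1], repeat=4))


def marginal_n_eliminated(n):
    """Same quantity with sums pushed inward; the sum over h of P(h|s) is 1."""
    p_s = {s: sum(bern(P_F1, f) * bern(P_A1, a) * bern(P_S1[(f, a)], s)
                  for f, a in product([0, 1], repeat=2))
           for s in (0, 1)}
    return sum(p_s[s] * bern(P_N1[s], n) for s in (0, 1))


print(marginal_n(1), marginal_n_eliminated(1))
```

The brute-force sum grows exponentially with the number of variables, which is why the marginal is "not so easy" in general even though a single joint assignment is.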

Inference In Bayesian Network • Generating a sample from the joint distribution: easy • How can we generate random samples according to P(F, A, S, H, N)? • Randomly draw a value for F: draw r from [0, 1] uniformly; if r < P(F=1), then output f = 1, else f = 0 • Draw each remaining variable the same way, parents before children, using its CPD given the values already drawn • Note we can estimate a marginal like P(N=n) by generating many samples from the joint distribution and counting the fraction for which N = n • Similarly for anything else we care about, e.g. P(F=1 | H=1, N=0)
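The sampling procedure can be sketched as follows. As the network figure is not in the transcript, the structure (F → S ← A, S → H, S → N) and all CPD values are assumptions for illustration. Each variable is drawn after its parents; marginals are estimated by counting, and the conditional is estimated by keeping only the samples that match the evidence.

```python
import random

# ASSUMED structure: F -> S <- A, S -> H, S -> N. All numbers are hypothetical.
P_F1 = 0.1                                                     # P(F=1)
P_A1 = 0.2                                                     # P(A=1)
P_S1 = {(0, 0): 0.05, (0, 1): 0.6, (1, 0): 0.8, (1, 1): 0.9}   # P(S=1 | F, A)
P_H1 = {0: 0.1, 1: 0.7}                                        # P(H=1 | S)
P_N1 = {0: 0.05, 1: 0.8}                                       # P(N=1 | S)


def sample_once(rng):
    """Draw one joint sample, visiting parents before children."""
    f = int(rng.random() < P_F1)          # draw r in [0, 1); f = 1 if r < P(F=1)
    a = int(rng.random() < P_A1)
    s = int(rng.random() < P_S1[(f, a)])
    h = int(rng.random() < P_H1[s])
    n = int(rng.random() < P_N1[s])
    return f, a, s, h, n


rng = random.Random(0)  # fixed seed so repeated runs give the same estimates
samples = [sample_once(rng) for _ in range(100_000)]

# Marginal estimate: fraction of samples with N = 1.
est_n1 = sum(n for *_, n in samples) / len(samples)

# Conditional estimate: keep only samples consistent with the evidence H=1, N=0.
kept = [f for f, a, s, h, n in samples if h == 1 and n == 0]
est_f1_given_h1_n0 = sum(kept) / len(kept)

print(est_n1, est_f1_given_h1_n0, len(kept))
```

Discarding samples that contradict the evidence is wasteful when the evidence is rare; only a fraction of the draws survive here, so the conditional estimate is noisier than the marginal one.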

Inference On a Chain • Converting Directed to Undirected Graphs


Inference On a Chain Compute the marginals
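The slide's equations did not survive in the transcript, so the following is a sketch of the usual message-passing scheme for a chain, under the assumption that the joint is written as a normalized product of pairwise potentials ψ(x_n, x_{n+1}). A directed chain fits this form by taking ψ(x_1, x_2) = P(x_1) P(x_2 | x_1) and ψ(x_n, x_{n+1}) = P(x_{n+1} | x_n) for the remaining links. A forward message and a backward message are computed once per node, and each marginal is their normalized product. The potential values and function names are illustrative.

```python
from itertools import product


def chain_marginals(potentials, k):
    """Marginals of every node on a chain.

    potentials[n][i][j] = psi(x_n = i, x_{n+1} = j); k is the number of states.
    """
    n_nodes = len(potentials) + 1

    # Forward messages: fwd[n][j] sums out everything to the left of node n.
    fwd = [[1.0] * k for _ in range(n_nodes)]
    for n in range(1, n_nodes):
        psi = potentials[n - 1]
        fwd[n] = [sum(fwd[n - 1][i] * psi[i][j] for i in range(k)) for j in range(k)]

    # Backward messages: bwd[n][i] sums out everything to the right of node n.
    bwd = [[1.0] * k for _ in range(n_nodes)]
    for n in range(n_nodes - 2, -1, -1):
        psi = potentials[n]
        bwd[n] = [sum(psi[i][j] * bwd[n + 1][j] for j in range(k)) for i in range(k)]

    marginals = []
    for n in range(n_nodes):
        unnorm = [fwd[n][i] * bwd[n][i] for i in range(k)]
        z = sum(unnorm)  # the same normalizer Z at every node
        marginals.append([u / z for u in unnorm])
    return marginals


def brute_force_marginals(potentials, k):
    """Reference answer: enumerate every joint configuration."""
    n_nodes = len(potentials) + 1
    unnorm = [[0.0] * k for _ in range(n_nodes)]
    for x in product(range(k), repeat=n_nodes):
        weight = 1.0
        for n, psi in enumerate(potentials):
            weight *= psi[x[n]][x[n + 1]]
        for n in range(n_nodes):
            unnorm[n][x[n]] += weight
    return [[u / sum(row) for u in row] for row in unnorm]


# Hypothetical potentials for a 4-node binary chain.
POTENTIALS = [
    [[1.0, 0.5], [0.5, 2.0]],
    [[2.0, 1.0], [1.0, 1.0]],
    [[1.0, 3.0], [2.0, 1.0]],
]
fast = chain_marginals(POTENTIALS, 2)
slow = brute_force_marginals(POTENTIALS, 2)
print(fast)
```

Message passing costs on the order of N·K² operations for N nodes with K states each, whereas the enumeration costs K^N.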



Belief Propagation • Belief propagation algorithms have been developed separately for Bayesian networks, MRFs, and factor graphs; the algorithms based on these different models are mathematically equivalent. For brevity, the standard belief propagation algorithm on MRFs is the one presented here • Taking a Markov random field as the example: given an observed state, infer the corresponding hidden state

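Belief Propagation • Introduce a variable m_ij(x_j) to denote the message passed from hidden node i to hidden node j • m_ij(x_j) expresses which state node j should be in • m_ij(x_j) has the same dimension as x_j; each component expresses how likely node i believes node j is to be in the corresponding state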

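Belief Propagation • Belief: the marginal probability obtained by this approximate computation is called the belief, and the belief of node i is written b_i(x_i). It is given by eq. (1): b_i(x_i) = k φ_i(x_i) ∏_{j ∈ N(i)} m_ji(x_i), where k is a normalization constant ensuring that the beliefs sum to 1 and N(i) denotes all neighbours of node i • Messages are updated by eq. (2), which keeps the messages consistent: m_ij(x_j) ← Σ_{x_i} φ_i(x_i) ψ_ij(x_i, x_j) ∏_{l ∈ N(i)\j} m_li(x_i) • In eq. (2), all messages sent to node i are multiplied together except the one sent from node j to node i; the summation runs over all possible states of node i • The equations are written here in the standard pairwise-MRF form, with φ_i(x_i) the local evidence at node i and ψ_ij(x_i, x_j) the compatibility between neighbouring nodes i and j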

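Belief Propagation • In practice, the computation starts from the nodes at the edge of the graph, and a message is updated only once all the messages it depends on are known. Using eq. (1) and eq. (2), the belief of each node is computed in turn • For loop-free MRFs, each message generally needs to be computed only once, which greatly improves computational efficiency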
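The schedule described above can be sketched on a small tree. The code assumes the standard pairwise-MRF form, with a local evidence table φ_i(x_i) per node and a compatibility table ψ_ij(x_i, x_j) per edge; the function names, the four-node tree, and all potential values are illustrative assumptions. A message from i to j is computed only when every other message into i is available, each message is computed once, and the resulting beliefs are compared with brute-force marginals.

```python
from itertools import product


def belief_propagation(n_nodes, k, phi, psi):
    """Beliefs on a tree-structured pairwise MRF.

    phi[i][xi] is the local evidence; psi[(i, j)][xi][xj] is the edge compatibility.
    """
    nbrs = {i: set() for i in range(n_nodes)}
    for i, j in psi:
        nbrs[i].add(j)
        nbrs[j].add(i)

    def pair(i, j, xi, xj):
        # Edge tables are stored once; look them up in either direction.
        return psi[(i, j)][xi][xj] if (i, j) in psi else psi[(j, i)][xj][xi]

    messages = {}
    pending = [(i, j) for i in nbrs for j in nbrs[i]]
    while pending:
        progressed = False
        for i, j in list(pending):
            needed = [(l, i) for l in nbrs[i] if l != j]
            if all(m in messages for m in needed):
                msg = []
                for xj in range(k):
                    total = 0.0
                    for xi in range(k):       # sum over the states of node i
                        incoming = 1.0
                        for m in needed:      # all messages into i except j's
                            incoming *= messages[m][xi]
                        total += phi[i][xi] * pair(i, j, xi, xj) * incoming
                    msg.append(total)
                messages[(i, j)] = msg
                pending.remove((i, j))
                progressed = True
        if not progressed:
            raise ValueError("graph has a loop; this schedule only handles trees")

    beliefs = []
    for i in range(n_nodes):
        b = list(phi[i])
        for j in nbrs[i]:
            b = [b[xi] * messages[(j, i)][xi] for xi in range(k)]
        z = sum(b)                            # normalization so beliefs sum to 1
        beliefs.append([v / z for v in b])
    return beliefs


def brute_force(n_nodes, k, phi, psi):
    """Reference marginals by enumerating every joint state."""
    unnorm = [[0.0] * k for _ in range(n_nodes)]
    for x in product(range(k), repeat=n_nodes):
        w = 1.0
        for i in range(n_nodes):
            w *= phi[i][x[i]]
        for (i, j), table in psi.items():
            w *= table[x[i]][x[j]]
        for i in range(n_nodes):
            unnorm[i][x[i]] += w
    return [[u / sum(row) for u in row] for row in unnorm]


# Hypothetical star-shaped tree: node 1 is connected to nodes 0, 2 and 3.
PHI = [[0.7, 0.3], [0.5, 0.5], [0.2, 0.8], [0.6, 0.4]]
PSI = {(0, 1): [[2.0, 1.0], [1.0, 2.0]],
       (1, 2): [[3.0, 1.0], [1.0, 3.0]],
       (1, 3): [[1.0, 2.0], [2.0, 1.0]]}
beliefs = belief_propagation(4, 2, PHI, PSI)
exact = brute_force(4, 2, PHI, PSI)
print(beliefs)
```

On a tree the beliefs equal the exact marginals. On a graph with loops the same update rule can be iterated until the messages stop changing, but the result is then only an approximation, and this sketch deliberately refuses such graphs.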


Learning in Bayesian Network • What you should know