Nonlinear optical flow estimation

This undergraduate project, supervised by Dr. Ohad Ben Shahar and Mr. Yair Adato, tackles the problem of estimating optical flow between consecutive frames of an image sequence. Methods based on the Horn & Schunck algorithm minimize their energy functional through the Euler-Lagrange equations, which does not guarantee a global minimum, so they are run on image pyramids, and pyramids can discard vital information. We explore an alternative: minimizing the discretized functional directly with regular minimization techniques such as Gradient Descent and Quasi-Newton methods. Our preliminary results indicate that optical flow can be estimated without pyramids; the best pyramid-free run took 283 sec for 1000 iterations, compared with 840 sec using 6 pyramids.

Presentation Transcript

Department of Computer Science, Ben-Gurion University. Nonlinear optical flow estimation. Rotem Kupfer & Alon Gat. Under the supervision of Dr. Ohad Ben Shahar & Mr. Yair Adato. Undergraduate Project Presentation Day, May 2011.

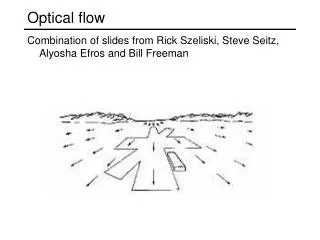

Problem definition: Given: two or more frames of an image sequence. Wanted: the displacement field between two consecutive frames, i.e. the optical flow.

Horn & Schunck Algorithm: We want to find u and v that minimize the energy functional, which is the sum of a data term (brightness constancy) and a smoothness term. • Data term - Penalizes deviations from constancy assumptions. • Smoothness term - Penalizes deviations from smoothness of the solution. • Regularization parameter α - Determines the degree of smoothness. Output: the optical flow!
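For reference, the Horn & Schunck energy functional in its standard textbook form is sketched below. The symbols I_x, I_y, I_t (image derivatives in x, y and time) and Ω (the image domain) are assumptions taken from the usual formulation; the slide's own notation may differ, for example by writing α² in place of α.

```latex
\[
E(u, v) = \int_{\Omega}
  \underbrace{\left( I_x u + I_y v + I_t \right)^2}_{\text{data term}}
  + \alpha \,
  \underbrace{\left( \lvert \nabla u \rvert^2 + \lvert \nabla v \rvert^2 \right)}_{\text{smoothness term}}
  \, dx \, dy
\]
```

A larger α weights the smoothness term more heavily and so yields a smoother flow field.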

Problems with this method • Minimizing via the Euler-Lagrange equations doesn’t guarantee a global minimum, so a pyramid method is used, which causes loss of vital information in some types of problems. • Main goal: to find a method that won’t need pyramids.

So what did we try to do? • Instead of solving the E-L equations (which need to be linearized), we wrote the functional as a set of n*m equations and minimized it with regular minimization methods (Gradient Descent, Quasi-Newton, and others).
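As a minimal sketch of this idea, the snippet below writes a Horn & Schunck-style energy directly on the pixel grid and derives its gradient by hand, then checks one gradient entry against a finite difference. The linear data term, the synthetic derivative fields and every variable name here are assumptions made for illustration; the project's actual functional is nonlinear and its gradient was produced symbolically.

```matlab
% Sketch only: discrete energy over n*m pixels with a hand-derived gradient.
% All fields are synthetic placeholders, not the project's image data.
n = 6; m = 5;                            % tiny grid so the check is instant
[X, Y] = meshgrid(1:m, 1:n);
Ix = sin(0.7*X + 0.3*Y);                 % placeholder derivative in x
Iy = cos(0.4*X - 0.5*Y);                 % placeholder derivative in y
It = 0.2*sin(X .* Y);                    % placeholder temporal derivative
alpha = 0.5;                             % regularization weight (arbitrary)

dx = @(f) f(:, 2:end) - f(:, 1:end-1);   % forward differences along columns
dy = @(f) f(2:end, :) - f(1:end-1, :);   % forward differences along rows
smooth = @(f) sum(sum(dx(f).^2)) + sum(sum(dy(f).^2));
energy = @(u, v) sum(sum((Ix.*u + Iy.*v + It).^2)) ...
                 + alpha*(smooth(u) + smooth(v));

% Gradient of the smoothness sum: backward minus forward difference, times 2.
gsmooth = @(f) 2*([zeros(n,1), dx(f)] - [dx(f), zeros(n,1)] ...
                + [zeros(1,m); dy(f)] - [dy(f); zeros(1,m)]);

u = 0.1*X;  v = -0.05*Y;                 % arbitrary test point
r  = Ix.*u + Iy.*v + It;                 % data-term residual per pixel
gu = 2*Ix.*r + alpha*gsmooth(u);         % dE/du at every pixel
gv = 2*Iy.*r + alpha*gsmooth(v);         % dE/dv at every pixel

% Central finite difference on one unknown as a sanity check.
h = 1e-4;  k = 8;                        % k is a linear pixel index
e = zeros(n, m);  e(k) = h;
fd  = (energy(u + e, v) - energy(u - e, v)) / (2*h);
err = abs(fd - gu(k));
fprintf('analytic %.6f, finite difference %.6f\n', gu(k), fd);
```

Once the energy and its gradient are available in this form, any general-purpose minimizer can be applied to the 2*n*m unknowns without linearizing the E-L equations.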

Mathematica for symbolic calculations. Where P are the image derivatives, and u, v are the optical flow components in the vertical and horizontal directions. Mathematica Symbolic Toolbox for MATLAB--Version 2.0 (http://library.wolfram.com/infocenter/MathSource/5344/)

Trying Polar Representation • The functional in polar coordinates:
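One polar form that is consistent with the gradient code on the "Leaving Mathematica" slide is sketched below. It is inferred from that code, not copied from the slide: the flow is written through a magnitude m and a direction (c, s), the unit-norm constraint on (c, s) is enforced softly by an exponential penalty, and z is presumably the frame (time) axis.

```latex
\[
u = m\,c, \qquad v = m\,s, \qquad c^2 + s^2 \approx 1
\]
\[
r = I_z + I_{zz} + (I_x + I_{xz})\, m c + (I_y + I_{yz})\, m s
    + I_{xx}\, m^2 c^2 + I_{xy}\, m^2 c s + I_{yy}\, m^2 s^2
\]
\[
E(c, s, m) = \sum_{i,j} \Big[ r^2 + e^{(c^2 + s^2 - 1)^2}
    + \alpha \left( \lvert \nabla c \rvert^2 + \lvert \nabla s \rvert^2 \right)
    + \beta \, \lvert \nabla m \rvert^2 \Big]
\]
```

Differentiating this E with respect to c, s and m at pixel (i,j) gives the data, penalty and smoothness terms that appear in gradC, gradS and gradM.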

MATLAB for numeric calculations • Quasi-Newton iteration. Problem: computing the inverse of a non-sparse matrix takes a long time: O(n^3) in the matrix dimension, which for an n*m image becomes O((n*m)^3). • Gradient Descent. Advantage: doesn’t require calculating the Hessian matrix.
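The trade-off can be illustrated with a minimal gradient descent sketch on a small synthetic problem. It assumes a linear Horn & Schunck-style data term, a fixed step size and made-up derivative fields; the project's own functional, step rule (normalized steps) and data are not reproduced here.

```matlab
% Sketch only: fixed-step gradient descent on a discrete flow energy.
% All fields and parameter values are illustrative assumptions.
n = 16; m = 16;
[X, Y] = meshgrid(1:m, 1:n);
Ix = sin(0.7*X + 0.3*Y);                 % placeholder derivative in x
Iy = cos(0.4*X - 0.5*Y);                 % placeholder derivative in y
It = 0.2*sin(X .* Y);                    % placeholder temporal derivative
alpha = 0.5;  step = 0.05;  nIter = 200; % arbitrary settings

dx = @(f) f(:, 2:end) - f(:, 1:end-1);
dy = @(f) f(2:end, :) - f(1:end-1, :);
smooth  = @(f) sum(sum(dx(f).^2)) + sum(sum(dy(f).^2));
gsmooth = @(f) 2*([zeros(n,1), dx(f)] - [dx(f), zeros(n,1)] ...
                + [zeros(1,m); dy(f)] - [dy(f); zeros(1,m)]);
energy  = @(u, v) sum(sum((Ix.*u + Iy.*v + It).^2)) ...
                  + alpha*(smooth(u) + smooth(v));

% A dense Hessian would need (2*n*m)^2 entries; gradient descent needs none.
nUnknowns = 2*n*m;
hessianEntries = nUnknowns^2;

u = zeros(n, m);  v = zeros(n, m);       % start from zero flow
E = zeros(nIter + 1, 1);
E(1) = energy(u, v);
for it = 1:nIter
    r  = Ix.*u + Iy.*v + It;             % data-term residual
    gu = 2*Ix.*r + alpha*gsmooth(u);     % gradient with respect to u
    gv = 2*Iy.*r + alpha*gsmooth(v);     % gradient with respect to v
    u  = u - step*gu;                    % descent update
    v  = v - step*gv;
    E(it + 1) = energy(u, v);
end
fprintf('%d unknowns, dense Hessian would hold %d entries\n', ...
        nUnknowns, hessianEntries);
fprintf('energy: %.4f -> %.4f after %d iterations\n', E(1), E(end), nIter);
```

Each iteration touches every pixel a constant number of times, so its cost grows linearly with n*m, whereas inverting a dense Hessian grows cubically. The price is that many more iterations are needed.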

Leaving Mathematica • Translating the symbolic expression to a numeric scheme:

    for i=2:imageSizeN-1
      for j=2:imageSizeM-1
        % Data-term residual at pixel (i,j), shared by all three gradients
        r = Iz(i,j)+Izz(i,j)+Ix(i,j)*c(i,j)*m(i,j)+Ixz(i,j)*c(i,j)*m(i,j) ...
            +Ixx(i,j)*c(i,j)^2*m(i,j)^2+Iy(i,j)*m(i,j)*s(i,j)+Iyz(i,j)*m(i,j)*s(i,j) ...
            +Ixy(i,j)*c(i,j)*m(i,j)^2*s(i,j)+Iyy(i,j)*m(i,j)^2*s(i,j)^2;
        % Argument of the penalty exp((c^2+s^2-1)^2)
        q = -1+c(i,j)^2+s(i,j)^2;
        % Gradient with respect to c: smoothness + penalty + data term
        gradC(i,j)=gradC(i,j) ...
            +alpha*(2*(-c(i-1,j)+c(i,j))+2*(-c(i,j-1)+c(i,j))) ...
            -2*alpha*(-c(i,j)+c(i,j+1))-2*alpha*(-c(i,j)+c(i+1,j)) ...
            +4*exp(q^2)*c(i,j)*q ...
            +2*(Ix(i,j)*m(i,j)+Ixz(i,j)*m(i,j)+2*Ixx(i,j)*c(i,j)*m(i,j)^2+Ixy(i,j)*m(i,j)^2*s(i,j))*r;
        % Gradient with respect to s: same structure as gradC
        gradS(i,j)=gradS(i,j) ...
            +alpha*(2*(-s(i-1,j)+s(i,j))+2*(-s(i,j-1)+s(i,j))) ...
            -2*alpha*(-s(i,j)+s(i,j+1))-2*alpha*(-s(i,j)+s(i+1,j)) ...
            +4*exp(q^2)*s(i,j)*q ...
            +2*(Iy(i,j)*m(i,j)+Iyz(i,j)*m(i,j)+Ixy(i,j)*c(i,j)*m(i,j)^2+2*Iyy(i,j)*m(i,j)^2*s(i,j))*r;
        % Gradient with respect to m: smoothness weighted by beta + data term
        gradM(i,j)=gradM(i,j) ...
            +beta*(2*(-m(i-1,j)+m(i,j))+2*(-m(i,j-1)+m(i,j))) ...
            -2*beta*(-m(i,j)+m(i,j+1))-2*beta*(-m(i,j)+m(i+1,j)) ...
            +2*(Ix(i,j)*c(i,j)+Ixz(i,j)*c(i,j)+2*Ixx(i,j)*c(i,j)^2*m(i,j)+Iy(i,j)*s(i,j)+Iyz(i,j)*s(i,j) ...
                +2*Ixy(i,j)*c(i,j)*m(i,j)*s(i,j)+2*Iyy(i,j)*m(i,j)*s(i,j)^2)*r;
      end
    end

Optimization • Calculation time of 60-360 minutes, while high-end algorithms run in around 20 secs. (CPU time complexity) • There are a couple of parameters (alpha, number of iterations, with or without filters (texture decomposition and median filter)) that need to be set for each run, and they are problem specific. (OUR time complexity) Resulting in a big HEADACHE!

Optimization: OpenMP, interfaced with MATLAB • So why use only one core when we have two or more? • The OpenMP Application Program Interface supports multi-platform shared-memory parallel programming in C/C++ on all architectures. OpenMP is a portable, scalable model that gives shared-memory parallel programmers a simple and flexible interface for developing parallel applications for platforms ranging from the desktop to the supercomputer. • MATLAB MEX files (in C++) with OpenMP. http://openmp.org/

Optimization: Condor to the rescue • Condor is a specialized workload management system for compute-intensive jobs. • So instead of specifically trying to find the ‘best’ parameters, we just ‘tried them all’. • We used Condor to run multiple (100 and more) experiments in parallel. CONDOR VIEW http://www.cs.wisc.edu/condor/ It’s easy to use, very practical, it’s FREE, and it’s installed only on the EE Labs…
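The "try them all" approach amounts to enumerating a parameter grid and handing each row to one job. The sketch below only builds such a grid; the parameter values are hypothetical, and how each row was actually passed to Condor is not shown in the slides.

```matlab
% Sketch only: enumerate every parameter combination for a sweep.
% The values below are made up for illustration.
alphas     = [0.1 0.5 1 5 10];           % regularization weights to try
iterCounts = [250 500 1000 2000 4000];   % iteration budgets to try
useTexture = [0 1];                      % texture decomposition off/on
useMedian  = [0 1];                      % median filter off/on

% ndgrid yields the full Cartesian product of the four parameter lists.
[A, N, T, M] = ndgrid(alphas, iterCounts, useTexture, useMedian);
jobs  = [A(:), N(:), T(:), M(:)];        % one row per experiment
nJobs = size(jobs, 1);

% Print the first few rows; each row would parameterize one job.
for k = 1:3
    fprintf('job %3d: alpha=%g iters=%d texture=%d median=%d\n', ...
            k, jobs(k, :));
end
fprintf('%d experiments in total\n', nJobs);
```

Because the experiments are independent of one another, they can run in parallel with no communication, which is the kind of workload a batch system handles well.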

Some preliminary results – Cartesian functional: Starting from boundaries

Best Results • NO pyramids, 1000 iterations: 283 sec (~4.7 min)! • 6 pyramids, 1000 iterations: 840 sec (14 min)

Conclusion and future direction • We have successfully run experiments without the need for pyramids (in “fair” play). • Gradient Descent uses normalized steps with a very small step size, resulting in |u| and |v| that are small. • Using problem-specific tools (like Condor and OpenMP) is HIGHLY recommended (saves time, and time is money).

Questions? The Ground Truth.